Search engines evaluate websites against hundreds of criteria, and they do so programmatically. The work of making a website meet the software-side criteria is called technical SEO. The core elements of a technical SEO checklist include:

- Canonical link structures of websites

- URL structures of websites

- Robots directives (robots.txt and robots meta tags)

The items we have listed above are software issues. Here are the content and design criteria:

- Keyword densities

- Heading tags

- Alt tags

Several of the most important ranking factors fall within the scope of technical SEO, so do not expect success from a website that has technical SEO problems. You can audit your website yourself with the checklist we have prepared, and many tools can help make the analysis smoother and more comprehensive. Leaving technical SEO issues unresolved leads to several problems. The first is a drop in your website’s rankings in search engines.

The second is poor user engagement metrics. Unresolved technical SEO issues can also cause crawl errors, which may even make your website disappear from search engine results pages altogether. To prevent all this, create an action plan. Scope the plan so that it does not waste the time of your SEO team or your developers. Such a plan will help improve your website’s rankings.

Search engines’ ranking algorithms change with every update. For Google, the figure is over 500 changes a year. That is why technical SEO work has to be renewed continually to keep up with the pace of these updates. The details change along the way, but the main goal is always the same: to improve SERP rankings through website and server optimizations.

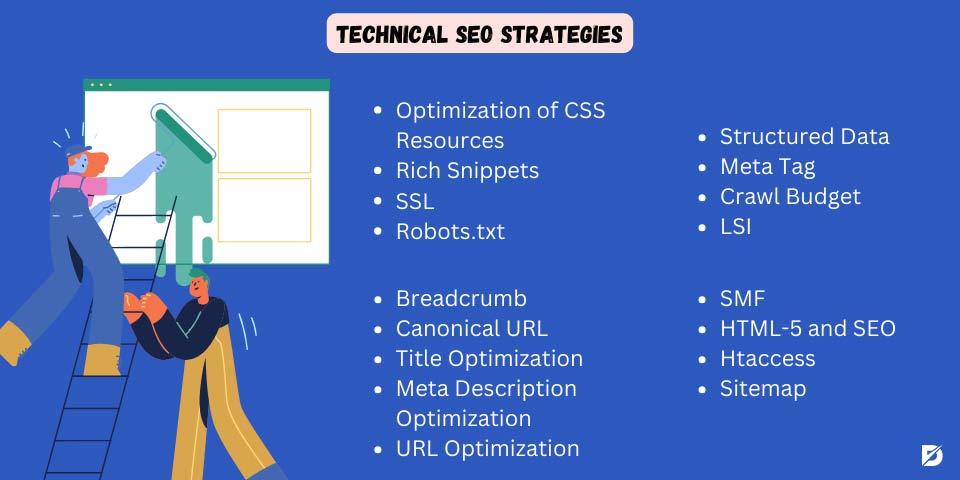

What Is Technical SEO?

Technical SEO is crucial for your website to be eligible to rank high in search results. If you are new to SEO, the technical side is where you are most likely to fall short. Fixing the technical errors on your website strengthens its SEO performance. We have prepared a detailed guide to technical SEO strategies, covering the following topics:

- Optimization of CSS Resources

- Rich Snippets

- SSL

- Robots.txt

- Structured Data

- Meta Tag

- Crawl Budget

- LSI

- Breadcrumb

- Canonical URL

- Title Optimization

- Meta Description Optimization

- URL Optimization

- SMF

- HTML-5 and SEO

- Htaccess

- Sitemap

Optimization of CSS Resources

In SEO, the speed of your website is essential to a good user experience, so it deserves serious effort during optimization. User experience is one of the most critical factors in search engine algorithms today, which is why you should work on your website’s loading speed. In this context, concentrate on the render-blocking resources flagged by Google’s testing tools.

To optimize your CSS, start by reducing the file size. Remove unnecessary code and use a tool that minifies and compresses the files. If you use Bootstrap with server-side rendering, you can embed critical CSS in a style tag in the HTML. To deliver the same CSS with less data, enable compression on the server side. You should also remove CSS rules that are no longer used anywhere in your code.
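
As a rough illustration of the size reduction described above, here is a minimal Python sketch. The `minify_css` and `gzip_size` helpers and the sample stylesheet are hypothetical names made up for this example. The minifier is deliberately naive (it would mangle whitespace inside quoted strings), so treat it as a demonstration of the idea, not a replacement for a real build tool.

```python
import gzip
import re


def minify_css(css: str) -> str:
    """Naive minifier for illustration: drops comments and extra whitespace."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # remove /* comments */
    css = re.sub(r"\s+", " ", css)                         # collapse whitespace runs
    css = re.sub(r"\s*([{};])\s*", r"\1", css)             # trim around { } ;
    return css.strip()


def gzip_size(text: str) -> int:
    """Size in bytes of the text after gzip compression."""
    return len(gzip.compress(text.encode("utf-8")))


if __name__ == "__main__":
    # Hypothetical stylesheet fragment, used only for the demonstration.
    sample = """
    /* Button styles */
    .button {
        color: #fff;
        background: #0a66c2;
    }
    """
    print(minify_css(sample))
    stylesheet = sample * 50  # simulate a larger, repetitive file
    print(len(stylesheet.encode("utf-8")), "bytes raw")
    print(gzip_size(stylesheet), "bytes gzipped")
```

Minification shrinks the file you store, while gzip shrinks what travels over the network. In practice the two are combined, with compression usually switched on in the web server configuration.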

Rich Snippets

Rich snippets do not directly affect your website’s rankings, but with the right strategy they bring indirect benefits. The term refers to regular Google search results that display additional data, taken from structured data in the page’s HTML. This extra data makes your result more visually interesting and helps it stand out on the results page, which tends to increase the click-through rate. To earn rich snippets, add structured data markup to your pages. The markup helps Google understand your page better and show it to the right users.

SSL

An SSL certificate is a digital certificate that verifies your website’s identity and enables an encrypted connection between the browser and your server, which helps keep visitors’ information safe. Browsers flag websites without a certificate as “Not Secure,” while sites with a valid certificate are shown as secure and are considered more reliable. You should obtain an SSL certificate as soon as you set up your website. You can get one from your hosting company or from a certificate authority, either paid or free.

SSL is especially important for e-commerce sites, and it establishes a relationship of trust between the website and the user.

Related articles about SSL:

- How to Get an SSL Certificate

- ERR_SSL_Protocol_Error & How to Fix It

- SSL Connection Error: How to Solve It

Robots.txt

The robots.txt file sits in the root directory of your website, on your web server. It tells search engine bots which pages they may and may not crawl, so you can keep bots away from pages you do not want crawled and make more efficient use of your crawl budget. You can view the file by appending /robots.txt to the URL of your home page. To create one, write the instructions in a plain text file named robots.txt on your computer and upload it to your site’s root directory. Don’t forget to test the file you created.
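
One way to test a robots.txt file before uploading it is Python’s standard `urllib.robotparser` module. The sketch below is only an illustration: the rules, the `build_parser` helper, and the example.com URLs are assumptions made up for the example, not recommendations for your site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content: block two sections for every bot.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/

Sitemap: https://www.example.com/sitemap.xml
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text locally, without fetching anything."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    parser = build_parser(ROBOTS_TXT)
    for url in (
        "https://www.example.com/admin/users",
        "https://www.example.com/blog/post",
    ):
        allowed = parser.can_fetch("Googlebot", url)
        print(url, "->", "allowed" if allowed else "blocked")
```

Note that this standard-library parser follows the original robots.txt conventions and may not match every extension that individual search engines support, so a final check in the search engine’s own testing tools is still worthwhile.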

Read related articles:

- Indexed, Though Blocked by robots.txt

- How to Create Robots.txt File

- Robots.txt in WordPress for SEO

- Robots.txt Sitemaps

Structured Data

Structured data is a standardized way of organizing the information on your pages so that search engine bots can understand it better. You can mark up your pages with the Schema.org vocabulary to help Google’s bots interpret them. Bots read the structured data to work out what information they can present to users in search results, and pages with valid markup become eligible for rich results. Used well, structured data helps Google show your website for the right queries and can drive more traffic.
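
To make this concrete, here is a small Python sketch that builds a Schema.org `Article` description as JSON-LD, one common format for structured data. The `article_json_ld` helper and the sample values are hypothetical, and the properties a real page needs depend on its content type.

```python
import json


def article_json_ld(headline: str, author: str, date_published: str) -> str:
    """Return a <script> element holding Schema.org Article data as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date, e.g. 2024-01-15
    }
    # Escape "</" so a value can never close the script element early.
    payload = json.dumps(data).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"


if __name__ == "__main__":
    # Sample values are placeholders for illustration only.
    print(article_json_ld("Technical SEO Checklist", "Jane Doe", "2024-01-15"))
```

The resulting script element would be placed in the HTML of the page it describes.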

Meta Tag

Meta tags are HTML elements in the head of a page that describe its content. They are not visible to end users, but search engine bots read them to understand, index, and present your content. A page’s meta tags carry information such as its title, description, and keywords. The meta description is a short text that summarizes the page and the information it provides, and it often serves as the preview shown on search results pages. Each description should be unique and persuasive enough to make users click.

Crawl Budget

Crawl budget is the number of pages on your website that Google’s bots visit and crawl per day. To use its resources efficiently, Google allocates a crawl budget to each site, and the number of pages it visits daily depends on how important it considers your website. If your crawl budget is too low, your website will not stay up to date in search results, and indexing of new pages may be delayed, so the crawl budget is worth optimizing. It depends on factors such as the number of pages indexed in Google, the overall size and speed of your site, and the number of backlinks. You can optimize it by earning more backlinks, eliminating unnecessary directories and redirects, and fixing problems with the links that point to your pages.

LSI

LSI (latent semantic indexing) means, in SEO practice, that the keywords and the content on your pages are semantically related. Check whether your Google-indexed pages contain the terms users actually search for, along with closely related terms. Pages whose content matches the meaning of a query, not just its exact words, tend to be more prominent in results. Writing with semantics in mind improves content quality and can bring more traffic to your website.

Search engines are getting smarter and more sophisticated at understanding meaning day by day, so this kind of work may increase your website’s traffic.

Breadcrumb

A breadcrumb is a navigation trail that lets both search engine bots and real visitors understand the hierarchy of your website. With it, visitors can see how main categories and subcategories are laid out. If the layout of your website is confusing, user experience suffers: users do not want to spend time on the site, and search engine bots have difficulty understanding it. Breadcrumbs may not matter much for small business websites and blogs, but they are very important for large sites with many categories, subcategories, and pages. They should be used and optimized especially on e-commerce sites.

Related article about breadcrumbs: What Is Structured Data? (+How to Benefit From It?)

Canonical URL

A canonical URL is declared with an HTML tag that tells search engine bots which page to treat as the authoritative version when a website has two or more pages with similar content. It is an important SEO element, used mainly to resolve duplicate content problems. You declare the authoritative version by adding a link tag with rel="canonical" to the page. As your website grows and the number of pages increases, content may be duplicated, and duplicates become hard to track. With this tag, you can indicate which of two URLs with similar content should be taken into account. The canonical tag can also serve as an alternative to a 301 permanent redirect when a redirect is not possible, although it does not redirect visitors.
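
When auditing pages, it helps to check which canonical URL each one actually declares. The Python sketch below uses the standard `html.parser` module. The `CanonicalFinder` class, the `find_canonical` helper, and the sample page are hypothetical names chosen for the example.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> it sees."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr_map = dict(attrs)
        # rel may hold several space-separated values; compare case-insensitively.
        rel_values = (attr_map.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attr_map.get("href")


def find_canonical(html_text: str):
    """Return the declared canonical URL, or None if the page has none."""
    finder = CanonicalFinder()
    finder.feed(html_text)
    return finder.canonical


if __name__ == "__main__":
    # Hypothetical page: a filtered listing that points to its main version.
    page = (
        "<html><head>"
        '<link rel="canonical" href="https://www.example.com/shoes/">'
        "</head><body>Shoes, sorted by price</body></html>"
    )
    print(find_canonical(page))
```

Running a check like this over similar URLs makes it easy to spot pages that declare no canonical version or that point to the wrong one.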

Related article about Canonical URL: What Are the Advantages of Canonical URL?

Title Optimization

Title tags play an important role in SEO strategy. They are HTML elements that help search engines evaluate what your web page is about. If you optimize your titles, bots will understand your content better and rank it for the right queries, and users will get a first impression of the content from the results page. Aim for titles of roughly 55-65 characters, because longer titles are cut off in search results. Place the main keyword near the beginning of the title, write original titles, and try to include your brand name.
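
The title guidelines above can be turned into a quick automated check. The sketch below is one possible reading of them: the `audit_title` helper is hypothetical, the 55-65 character range comes from this section, and treating “near the beginning” as “within the first half of the title” is an assumption made for the example.

```python
def audit_title(title: str, keyword: str, min_len: int = 55, max_len: int = 65):
    """Return a list of issues found in a page title (empty list = no issues)."""
    issues = []
    length = len(title)
    if length < min_len:
        issues.append(f"too short ({length} < {min_len})")
    elif length > max_len:
        issues.append(f"too long ({length} > {max_len})")

    position = title.lower().find(keyword.lower())
    if position == -1:
        issues.append("keyword missing")
    elif position > len(title) // 2:
        # Assumption: "near the beginning" means the first half of the title.
        issues.append("keyword not near the beginning")
    return issues


if __name__ == "__main__":
    # Hypothetical titles for illustration.
    print(audit_title(
        "Technical SEO Checklist: 13 Steps to Audit Your Website Fast",
        "technical SEO",
    ))
    print(audit_title("Home", "technical SEO"))
```

An empty list means the title passed both checks. Character counts are only a proxy, since search engines truncate titles by display width, not by a fixed number of characters.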

Related articles about Title and Description:

- How to Write Creative Blog Titles and Headlines?

- How to Optimize Title, Description, and URL for SEO?

Meta Description Optimization

A meta description is a piece of HTML that summarizes your web page on search engine results pages. In Google, it appears as the descriptive text under each result, and it can be viewed as a summary of the page’s content. Although not a direct ranking factor, a convincing description can increase the click-through rate, so optimizing it is part of technical SEO. The description should be clear, original, and related to the page content. On WordPress-based websites, you can use plugins to edit it. You can also add it by hand with the code <meta name="description" content="...">, where the content attribute holds your summary. Keep the description to a maximum of about 155 characters and include your keywords.

Do not stuff it with keywords. Write original text with informative statements, and try to persuade users with a clear call to action.
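
If you generate pages from templates, a small helper can build the tag and enforce the length limit at the same time. The following Python sketch is an illustration under stated assumptions: `meta_description_tag` is a hypothetical helper, and the 155-character limit is the figure used in this section.

```python
from html import escape


def meta_description_tag(description: str, max_len: int = 155) -> str:
    """Build a meta description tag, rejecting text over the length limit."""
    text = " ".join(description.split())  # normalize whitespace and line breaks
    if not text:
        raise ValueError("description is empty")
    if len(text) > max_len:
        raise ValueError(f"description is {len(text)} characters (max {max_len})")
    # Escape quotes and ampersands so the attribute value stays valid HTML.
    return f'<meta name="description" content="{escape(text, quote=True)}">'


if __name__ == "__main__":
    # Hypothetical description text for illustration.
    print(meta_description_tag(
        "Follow our technical SEO checklist to find crawl errors, "
        "fix duplicate content, and speed up your website."
    ))
```

Raising an error for over-long text, instead of silently truncating it, keeps the decision about what to cut with the person writing the description.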

URL Optimization

The address your browser displays for a web page is called its URL. Every web page has one, and it matters to both search engines and visitors, so the page URLs on your website should be SEO-friendly. A well-built URL helps search engine bots understand the page, and users can tell what the page is about just by looking at it. Google’s SEO Starter Guide recommends simple, descriptive URLs. Ideally, a URL consists of 2-4 words and contains the focus keyword near the beginning. Leave out numbers, dates, special characters, and other unnecessary information, and use only hyphens to separate words. You should also avoid URL parameters and keyword repetition.
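
These URL rules translate naturally into a “slug” function that turns a page title into a URL segment. The Python sketch below is a minimal illustration: the `slugify` helper, the small stop-word list, and the four-word limit are assumptions for the example, not a standard.

```python
import re
import unicodedata

# Hypothetical, deliberately short list of filler words to drop.
STOP_WORDS = {"a", "an", "and", "for", "in", "of", "the", "to"}


def slugify(title: str, max_words: int = 4) -> str:
    """Turn a title into a short, hyphen-separated, lowercase URL slug."""
    # Strip accents: "Café" becomes "Cafe".
    ascii_text = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Keep runs of letters only, which drops numbers, dates, and punctuation.
    words = re.findall(r"[a-z]+", ascii_text.lower())
    words = [word for word in words if word not in STOP_WORDS]
    return "-".join(words[:max_words])


if __name__ == "__main__":
    print(slugify("Technical SEO Checklist 2024: The Guide!"))
    print(slugify("Café Menü"))
```

Dropping numbers follows the advice in this section. If a number is essential to the page, such as a model name, you would adjust the pattern accordingly.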

Related article: How to Optimize Title, Description and URL for SEO

SMF

SMF, short for Simple Machines Forum, is software that lets you set up and launch a simple forum site in a short time. It is written in PHP and offers a range of themes and plugins. With SMF, you can run forums in different languages and benefit from advanced permission management for users. It is an open-source application with XHTML, XML, RSS, and WAP support, and it lets you install or remove updates and add-ons automatically. When making SEO adjustments, start with the URL settings. Next, create a robots.txt file. Then make the adjustments needed to use H1 tags, and set up meta tag generation. You can also customize the header and footer areas of your forum. Finally, make the necessary .htaccess adjustments.

HTML-5 and SEO

HTML-5 attracts attention with its new tags and content models, and these new tag structures bring some changes to SEO. First of all, HTML-5 enables better page segmentation: you can get a more functional website by grouping your content according to what users look for. The article tag marks up the main, self-contained content of a page, and the section tag divides a page into sections. The header tag defines the top part of a page, the footer tag marks the bottom part, and the nav tag wraps in-site navigation links. Used together, these semantic tags give search engines clearer information about your page structure.

Htaccess

An .htaccess file is a configuration file used by certain web servers, such as Apache, that lets you change settings for your website. You can manage authorization and access restrictions with it, for example by password-protecting a folder. The file has an important place in technical SEO, and you can create it for your own website with very little coding knowledge. With .htaccess, you can set up 301 redirects, redirect both dynamic and static URLs, fix URL problems, and handle pages that return 404 errors.

It also lets you perform operations such as IP blocking, blocking hostile bots, domain redirection, data optimization, maintenance pages, spam blocking, WWW configuration, and SEO-friendly URL creation.

Sitemap

Sitemaps are files that tell search engine bots which URLs on your website are available for crawling. An XML sitemap lists the URLs of the pages on the website, so by creating one, webmasters can point search engine bots to the pages they want crawled. XML sitemaps are built for search engines, while HTML sitemaps list pages hierarchically for users. Thanks to a sitemap, search engine bots understand your website better and can discover all your pages. If you have a sitemap and submit it to Google, your crawl budget will be used more efficiently. Pages that you do not want indexed should be left out of the sitemap.
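
For a small site, an XML sitemap can be generated with a few lines of code. The Python sketch below uses the standard `xml.etree.ElementTree` module and the sitemaps.org namespace. The `build_sitemap` helper and the example.com URLs are hypothetical, and larger sites usually rely on their CMS or a plugin instead.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls) -> str:
    """Build sitemap XML from (loc, lastmod) pairs; lastmod may be None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in urls:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod  # e.g. 2024-01-15
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages for illustration.
    print(build_sitemap([
        ("https://www.example.com/", "2024-01-15"),
        ("https://www.example.com/blog/", None),
    ]))
```

The output would be saved as sitemap.xml in the site root and then submitted through Google Search Console or referenced from robots.txt.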

Detailed Technical SEO Checklist

Now that we’ve explained the scope and terms of a technical SEO audit, it’s time to go through our checklist!

Technical SEO Checklist:

- Detect Your Mistakes

- Website Architecture

- Duplicate Content

- Schema Markup

- Website Speed

- Using HTTPS

- Htaccess 301 Redirect

- Crawling

- Cache

- Indexing

- Link Building

- HTTP Response Codes

- Mobile-First Indexing

Detect Your Mistakes

Before you plan any technical SEO work on your website, the best approach is to detect the errors first. Google Search Console lets you identify faulty pages and run a free analysis of technical SEO errors. You can also perform a technical SEO audit with analysis tools such as Semrush and Ahrefs. Once you identify the errors, fix them promptly, and many items on the technical SEO checklist will fall into place. Some elements need to be analyzed regularly as part of a technical SEO audit. Always identify your mistakes first, and then look for ways to solve them.

Related article: How to Perform an SEO Audit

Website Architecture

Website architecture describes how information is structured on a website. Examples include the way web pages are organized into categories and the ways users navigate between sections. Architecture affects how both users and search engines experience your website.

Websites with bad architecture may have many dead-end pages, as well as unwieldy subdomains or subdirectories. A website with good architecture, on the other hand, does not waste crawling time, so search engines can process it faster. Here is what you can do to give your website a good architecture.

First, make sure your website has an XML sitemap. Then, organize your URLs in a logical flow, from domain to category to subcategory, so that new URLs fit the same structure. Finally, optimize your URL structure for search: move the main keywords closer to the root domain, and keep URLs no longer than 60 characters.

Duplicate Content

Duplicate content is content that appears on multiple pages within the same domain or across different domains. It is an important item on a technical SEO checklist. Such content is usually not malicious, which is why it does not draw a penalty from Google. However, you still need to fix it. Search engines need to know which version of a web page is the original, or canonical, page. Otherwise, they will not know which version to show in the search results, and your pages may not get the rankings they deserve or may be filtered out in favor of another version.

Related article: How Does Duplicate Content Affect SEO?

Schema Markup

Schema markup, also called structured data, has no direct effect on rankings. It describes the content of a page in a machine-readable form, and microdata is one of the formats used to write it. Users search on Google with specific purposes in mind, and schema markup helps search engines serve both users and website owners better. When you start using it, search engines get to know your site and its content better, so they can provide more relevant results to users. Schema markup can also bring your website more engagement, which may indirectly lead to better rankings on Google and other search engines.

Use Dopinger’s brand new tool, Schema Markup Generator, for your content!

Website Speed

Research on this subject suggests that if a web page takes more than three seconds to load, almost half of users leave the website. When users leave this way, it sends negative signals to search engines about the quality of your website. Google announced in 2010 that site speed is a ranking signal. There are several improvements you can make to increase the speed of your website:

- Minify HTML, JavaScript, and CSS files. Combining them may also help.

- Eliminate unnecessary characters in the code, such as extra line breaks.

- Compress large media files with tools like HandBrake (video) and TinyPNG (images), so that you serve them in highly compressed formats.

- Reduce plugins, scripts, and redirect chains. The less code and the fewer files your website has, the faster it opens, so keep page weight and the number of requests to a minimum.

Related articles about website speed:

- What is GTmetrix Speed Test?

- Google PageSpeed Insights

- What Affects WordPress Speed Performance?

- Page Speed and SEO Relation (How It Matters)

- How to Speed Up Your eCommerce Website

- Best WordPress Plugins to Speed Up Site

- How Does Duplicate Content Affect SEO?

Using HTTPS

HTTPS is the secure version of HTTP, the primary protocol a web browser uses to exchange data with your website. It encrypts data in transit to keep it safe, which makes it possible to carry out sensitive transactions, such as online banking and email, reliably. It is a very important SEO criterion for websites that process and transfer sensitive data, such as banking services, online retailers, and healthcare providers. Google started using HTTPS as a ranking signal in 2014. You should use HTTPS on your website to protect users, data, and transactions. It also has a positive effect on user experience.

Htaccess 301 Redirect

The .htaccess file is a configuration file that lets you change Apache server settings. It is located in the root directory of your website, and if it does not exist, you can create it yourself. To set up a 301 redirect, first locate the file by following the steps below.

- Log in to your server over FTP.

- Go to the root directory.

- Open the .htaccess file in a text editor such as Notepad. If the file does not exist in the root directory, create it.

- Add your 301 redirect directives to send a particular old page to a new page.

- End the file with a blank line. The server reads the file line by line, so the final line break makes sure the last directive is terminated properly.

That’s all you have to do.
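
If you have many old URLs to redirect, you can generate the directives instead of typing them by hand. The Python sketch below emits lines in the `Redirect 301 old-path new-url` form used by Apache’s mod_alias module. The `redirect_rules` helper and the example paths are hypothetical, and the sketch assumes mod_alias is enabled on your server.

```python
def redirect_rules(mapping: dict) -> str:
    """Build .htaccess 301 redirect lines from {old_path: new_url} pairs."""
    lines = []
    for old_path, new_url in mapping.items():
        if not old_path.startswith("/"):
            raise ValueError(f"old path must start with '/': {old_path!r}")
        lines.append(f"Redirect 301 {old_path} {new_url}")
    # End with a newline so the last directive is properly terminated.
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical old and new URLs for illustration.
    print(redirect_rules({
        "/old-page": "https://www.example.com/new-page",
        "/2019/summer-sale": "https://www.example.com/sale",
    }), end="")
```

You would paste the output into the .htaccess file in your root directory, after taking a backup of the existing file.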

Crawling

Crawling is the process by which search engine spiders browse the pages of your website at certain intervals. They do this by following the links between your pages, as well as the links that point to your pages from other websites. These bots cannot spend time exploring every single page on the internet.

Instead, we may assume that they prioritize according to the quality value they assign to each website. In the SEO world, this value is called the crawl budget, and it has no fixed numerical value. Following this technical SEO checklist helps you use the budget efficiently, so that spiders discover, crawl, and index your web pages more frequently.

Using the Browser Caching Feature

Browser caching increases the loading speed of your website, because browsers do not have to download images and other resources over and over again. When users return after their first visit, the browser pulls these resources from its cache for a specified period, so the site displays much faster. The same is true for bots: re-fetching static resources that rarely change on every visit costs them extra waiting time.

Such static resources include logos and favicons. You should let bots that crawl your pages load all of your content as quickly as possible, and enabling caching helps you achieve this. To do so, set an expiration date or a maximum lifetime for static resources in the HTTP headers. These settings tell the browser to load the copies already stored on the local disk instead of requesting them over the network.
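
The two header options mentioned above are `Expires` (an expiration date) and `Cache-Control: max-age` (a maximum lifetime in seconds). The Python sketch below shows what such headers look like. The `static_cache_headers` helper and the one-year lifetime are assumptions for illustration, and on a real site these headers are normally set in the web server or CDN configuration.

```python
import time
from email.utils import formatdate

ONE_YEAR = 365 * 24 * 60 * 60  # lifetime in seconds (illustrative choice)


def static_cache_headers(max_age_seconds: int, now: float = None) -> dict:
    """Return caching headers for a static resource such as a logo."""
    if now is None:
        now = time.time()
    return {
        # Maximum lifetime: the browser may reuse its copy for this long.
        "Cache-Control": f"public, max-age={max_age_seconds}",
        # Expiration date in HTTP date format, kept for older clients.
        "Expires": formatdate(now + max_age_seconds, usegmt=True),
    }


if __name__ == "__main__":
    for name, value in static_cache_headers(ONE_YEAR).items():
        print(f"{name}: {value}")
```

When both headers are present, `Cache-Control: max-age` takes precedence over `Expires`. Long lifetimes work best for files whose names change whenever their content changes.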

Indexing

Spiders associate your web pages with the keywords they contain and then add them to the search engine’s index. This process is called indexing, and it is one of the first terms that come to mind in a technical SEO checklist. Indexing works as follows. When a user performs a search query, the search engine does not index pages live. Instead, it looks up the groups of pages that were previously indexed and associated with the query, and then sorts the relevant results among them. The search engine considers many algorithmic factors when ordering these pages.

It also considers a large number of ranking signals. This ordering process is called ranking. The top 10 organic results on the first page are the most important ones. To compete for them, all your web pages should be crawlable and indexable.

Related articles about Indexing:

- How to Get Google to Index Your Site? (Easiest Way)

- Indexed, Though Blocked by robots.txt

- How to Make Google Crawl And Index Your Web Site?

- How Long Does It Take to Index a New Website?

- How to Fix “Discovered – Currently Not Indexed”

- The Most Common Indexing Problems and Their Solutions

- Google Indexing API

Link Building

Link building comes in two types: off-site and on-site. Off-site link building is the process of getting links from other websites to yours, while on-site link building covers the links within your own website. Hyperlinks are how users navigate between pages on the internet, and search engines use them to crawl the web: bots scan the pages on your website one by one and follow the links in them. There are many link building techniques, but with today’s algorithms, the quality of the links you build is critical, and the wrong actions can harm your website. Here are a few techniques you can use.

Create fun, high-quality, original, or unusual content that people want to share. Before creating it, determine who your target audience is. Once you have valuable content and know where your audience is, it becomes easier to earn links: if your content is relevant to your users, they may link to your website. On the on-site side, the technique is called internal linking. It guides both Googlebot and users by creating hyperlinks between the pages of your site.

HTTP Response Codes

An HTTP response code, or status code, is part of the server’s response to a request made by the browser. These codes are one of the clearest ways to see what is happening between the browser and the server, starting from the first request a browser makes to load a website. Search engine spiders read them to determine the status of a page. The classes that matter most for technical SEO are 2xx (success), 3xx (redirection), 4xx (client error), and 5xx (server error).
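
When you review crawl reports or server logs, it helps to group status codes by class. The Python sketch below does this with the standard `http.HTTPStatus` enum. The `status_class` and `describe` helpers are hypothetical names for the example.

```python
from http import HTTPStatus

# The first digit of a status code identifies its class.
CLASSES = {
    1: "informational",
    2: "success",
    3: "redirection",
    4: "client error",
    5: "server error",
}


def status_class(code: int) -> str:
    """Map a status code to its class name, e.g. 404 -> 'client error'."""
    return CLASSES.get(code // 100, "other")


def describe(code: int) -> str:
    """Return the code with its standard phrase and class."""
    try:
        phrase = HTTPStatus(code).phrase
    except ValueError:  # code is not a registered status
        phrase = "Unknown"
    return f"{code} {phrase} ({status_class(code)})"


if __name__ == "__main__":
    for code in (200, 301, 404, 503):
        print(describe(code))
```

From an SEO perspective, 301 responses in the output signal permanent redirects, while 404 and 503 responses point to pages that need fixing.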

Related article: How to Fix HTTP Error 503

Mobile-First Indexing

Before mobile-first indexing, desktop pages determined both mobile and desktop search results. Mobile-specific factors, such as mobile click-through rates and mobile site speed, carried less weight. As a result, the same page could rank several positions apart in desktop and mobile results. In 2018, Google announced that it had started switching to mobile-first indexing. From then on, the content of the mobile pages began to determine the position of the desktop pages as well. Google states that it will always have a single index. In other words, there will not be a mobile index separate from the main index.

At first, Google still indexed mostly desktop pages, replacing them with mobile versions over time. Google Search Console sends a message to website owners when their site is switched to mobile-first indexing, and most websites have already received this message.

In Google Search Console, you may see the share of crawl requests from Googlebot Smartphone increase over time. With mobile-first indexing, the mobile version of a page is the one that appears in the search results. For this reason, it is of great importance to be ready for mobile-first indexing, so that you do not lose traffic and positions. Page content is the basis of mobile-first indexing. Therefore, make sure that all the content you offer in the desktop version is also available on the mobile pages. This content includes:

- Images

- Category Descriptions

- CSS and JS Resources

- Structured Data Markings

- Breadcrumb

- Internal link structure

- All data types presented on the webpage

Make sure the mobile version presents the content in full. You can check this with a mobile compatibility test.

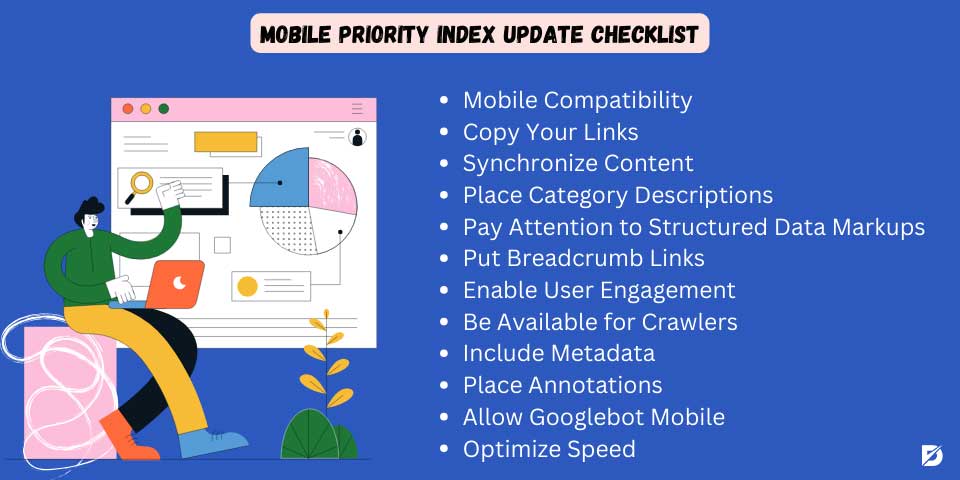

Mobile Priority Index Update Checklist

With mobile-first indexing, Google takes the mobile view of your website into account, instead of the desktop view, when indexing and ranking it. Since July 1, 2019, mobile-first indexing has been enabled by default for all new websites. If you prepare your website for mobile-first indexing, Google will crawl and index it more effectively. To do so, optimize your website’s mobile performance and tailor your content to the mobile experience. Additionally, use structured data and make sure the metadata suits mobile users. It is also better to avoid Flash and pop-up windows.

- Mobile Compatibility

- Copy Your Links

- Synchronize Content

- Place Category Descriptions

- Pay Attention to Structured Data Markups

- Put Breadcrumb Links

- Enable User Engagement

- Be Available for Crawlers

- Include Metadata

- Place Annotations

- Allow Googlebot Mobile

- Optimize Speed

Mobile Compatibility

Websites that are not developed for mobile devices are at a clear disadvantage in mobile SERPs. Therefore, your website must be mobile-compatible. The theme of your website should work smoothly on phones and tablets with Android and iOS operating systems. You need to design the pages and menus on your website so that they are easy to tap on mobile devices. At the same time, the font size should be readable on mobile. User experience on mobile devices is very important. You can apply responsive design to make your website work on different mobile screen sizes. The character count of your website’s title and meta tags should also be short enough to be displayed in full in mobile search results. Mobile-compatible websites are more advantageous when it comes to mobile-first indexing.

Copy Your Links

When you start working to make your website mobile-compatible, you should make sure that all your pages are also created for mobile. When you do link optimization, make sure that every page and its link also exists in the mobile version. In order for your pages to work smoothly in mobile browsers and on mobile screens, the links on the desktop version must have mobile counterparts. In this way, all your pages, especially the home, product, category, and blog pages, can work smoothly in mobile browsers.

Synchronize Content

You need to optimize the content on your website for mobile. First, all products and product pages listed on mobile and desktop must be the same. All content on your website should be optimized for user experience on mobile devices. Therefore, it is a good idea to write your content in short paragraphs. A paragraph length of 50-100 words is ideal for the mobile experience. Make sure your content displays properly on both desktop and mobile. Don’t forget to optimize the multimedia content on your page, such as images and videos, for mobile screens.
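The 50-100 word guideline is easy to check automatically. Here is a small sketch, assuming the page text is available as a plain string with paragraphs separated by blank lines; the function name `paragraphs_outside_range` is hypothetical.

```python
def paragraphs_outside_range(text, min_words=50, max_words=100):
    """Return (index, word_count) for each paragraph outside the word range."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        count = len(paragraph.split())
        if not min_words <= count <= max_words:
            flagged.append((index, count))
    return flagged
```

Very short paragraphs are flagged as well. Whether those are a problem is a judgment call, so treat the output as a list of paragraphs to review rather than a list of errors.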

Place Category Descriptions

As mentioned, Google uses the mobile version of your web pages first when ranking them. For this reason, you should publish content on product and category pages so that search engine bots can rank your web pages correctly. Create content for product and service pages on the mobile version of all pages on your website. Make sure that paragraphs and sentences are short, and do not forget to use title tags. Also, optimize your content for keywords. The better the product and category content in the mobile version, the better your ranking performance can be on desktop and mobile.

Pay Attention to Structured Data Markups

Structured data is vital for search engine bots to understand the content on your web page. Therefore, bots that take into account your mobile version should be able to see all structured data on the desktop in the mobile version as well. Test all structured data in the desktop version of your website on the mobile version. Don’t forget to apply all structured data that will increase SEO performance to the mobile version of your web pages. Never forget to check structured data regularly and fix structured data that is not working.
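One way to test that the mobile version carries the same structured data is to compare the schema types declared on each version. The sketch below assumes the markup is embedded as JSON-LD in `<script type="application/ld+json">` tags and that you have both HTML versions as strings; `JsonLdExtractor`, `schema_types`, and `missing_on_mobile` are hypothetical names. Microdata and RDFa are not covered.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the parsed contents of JSON-LD script tags."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # broken markup is skipped, so its type counts as missing


def schema_types(html):
    """Return the set of top-level @type values found in the page."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types = set()
    for block in parser.blocks:
        for item in block if isinstance(block, list) else [block]:
            if isinstance(item, dict) and "@type" in item:
                value = item["@type"]
                types.update(value if isinstance(value, list) else [value])
    return types


def missing_on_mobile(desktop_html, mobile_html):
    """Schema types present on desktop but absent from the mobile version."""
    return schema_types(desktop_html) - schema_types(mobile_html)
```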

Put Breadcrumb Links

If you have an e-commerce site or your website contains many pages, main categories, and subcategories, there are some strategies you need to implement so that visitors and search engine bots understand the website hierarchy. You can strengthen your website’s hierarchy by placing breadcrumb links in the mobile version of your web page. At the same time, a user visiting your website can navigate between your categories with a single click, no matter how deep they dive. A practical navigation experience when accessing your website from mobile devices will increase the visitor retention rate. Therefore, be sure to use breadcrumb links and optimize them for the mobile version.
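Breadcrumb links can also be described to search engines with schema.org `BreadcrumbList` markup. The following sketch generates that markup as JSON-LD from a list of (name, URL) pairs; the function name `breadcrumb_json_ld` and the example.com URLs are placeholders.

```python
import json


def breadcrumb_json_ld(trail):
    """Build BreadcrumbList JSON-LD from (name, url) pairs, home page first."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "BreadcrumbList",
            "itemListElement": [
                {
                    "@type": "ListItem",
                    "position": position,
                    "name": name,
                    "item": url,
                }
                for position, (name, url) in enumerate(trail, start=1)
            ],
        },
        indent=2,
    )


# Example trail for a hypothetical category page.
markup = breadcrumb_json_ld(
    [
        ("Home", "https://example.com/"),
        ("Shoes", "https://example.com/shoes/"),
    ]
)
```

The resulting string would go inside a `<script type="application/ld+json">` tag on both the desktop and the mobile version of the page.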

Enable User Engagement

Involve users in the mobile version of your website. As more users interact with your website, the time they spend on it will increase, and the bounce rate will improve as well. Let your visitors leave comments and reviews on your website. You can increase interaction on your website by including user-generated content. This can both increase the authority of your website in the eyes of the search engine and increase loyalty to your brand. When you optimize the user experience while optimizing your website’s mobile experience, this can act as a positive SEO signal. Create a comments section on the mobile version and allow your users to leave ratings. Responding to comments and reviews is also a very good strategy.

Be Available for Crawlers

Users who want to visit your website will use different browsers. Therefore, the mobile version of your website needs to be optimized for different browsers. Make sure that it works in the Safari browser for iOS users and in all browsers, especially Google Chrome, Opera, and Mozilla Firefox, for Android users. Your website’s CSS and JS code must render correctly in these browsers. When your website works in any browser, it can attract more visitors. Moreover, in this way, the SERP results of your website may also improve. Try to make the mobile version of your website available for all browsers used on different mobile devices.

Include Metadata

Metadata gives your website a significant advantage in technical SEO. Your meta titles and meta descriptions must be optimized for mobile devices. In Google search results, your meta descriptions are important for convincing users to click on your page. So, metadata must have a suitable number of characters for mobile devices. On mobile devices, meta descriptions should be 110-120 characters at most. For this reason, you need to create shorter meta descriptions for mobile devices than for desktop devices.
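To keep descriptions within the limit mentioned above, you could shorten them at a word boundary. This is a minimal sketch that uses 120 characters as the default limit, taken from the guideline in this section; the function name `truncate_description` is hypothetical.

```python
def truncate_description(description, limit=120):
    """Shorten a meta description to at most `limit` characters."""
    # Collapse runs of whitespace so the count matches what is displayed.
    description = " ".join(description.split())
    if len(description) <= limit:
        return description
    # Reserve one character for the ellipsis, then cut at the last full word.
    cut = description[: limit - 1].rsplit(" ", 1)[0]
    return cut.rstrip(",.;: ") + "…"
```

Automatic truncation is only a fallback. A description written by hand for the shorter length will usually read better.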

Place Annotations

There may be some problems with the pages and content on your website, and search engine bots may need help to interpret your pages correctly. Therefore, you need to create annotations such as canonical, previous-next, and hreflang for the mobile pages on your website. Google may perceive similar content on your website as duplicate content. To avoid this situation, you can tell Google which of your content should be taken as the main content by using a canonical tag. Thus, you can prevent duplicate content problems. If content on mobile pages continues across several pages, you can add buttons that allow users to go to the previous or next page with a single click.

In this way, the user experience is strengthened, the bounce rate can be reduced, and your visitors stay on your website longer. If your website is multilingual or you target a specific geographical area with your content, you can use the hreflang tag for mobile pages.
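As an illustration, the canonical and hreflang annotations are `<link>` elements placed in the page head. The sketch below builds them from a canonical URL and a mapping of language codes to URLs; the function name `annotation_tags` and the example.com URLs are placeholders, and your CMS may already generate these tags for you.

```python
from html import escape


def annotation_tags(canonical_url, alternates):
    """Build canonical and hreflang <link> tags.

    `alternates` maps hreflang codes (for example "en" or "de") to URLs.
    """
    tags = [f'<link rel="canonical" href="{escape(canonical_url, quote=True)}">']
    for code, url in sorted(alternates.items()):
        tags.append(
            f'<link rel="alternate" hreflang="{escape(code, quote=True)}" '
            f'href="{escape(url, quote=True)}">'
        )
    return "\n".join(tags)


# Example for a hypothetical page available in English and German.
head_tags = annotation_tags(
    "https://example.com/shoes/",
    {"en": "https://example.com/shoes/", "de": "https://example.com/de/schuhe/"},
)
```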

Allow Googlebot Mobile

When search engine bots visit your website, they must understand your website’s hierarchy and know which pages they can or cannot crawl. Creating a sitemap is the most effective way to do this. Googlebot mobile must be able to access the sitemap of your website. In this way, it can learn all the mobile pages that need to be indexed and easily understand the general hierarchy of your website. If you allow Googlebot mobile, your website’s SERP visibility can become stronger. This reflects positively on your overall SEO strategy, especially technical SEO.
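Whether a crawler is allowed to reach your pages and your sitemap depends largely on robots.txt. Here is a small sketch using Python's standard `urllib.robotparser` module, assuming you have the robots.txt content as a string. The helper name `crawl_report` is hypothetical, and `"Googlebot"` is used as the user agent token on the assumption that your rules address Google's crawler by that name. `site_maps()` requires Python 3.8 or later.

```python
from urllib.robotparser import RobotFileParser


def crawl_report(robots_txt, urls, user_agent="Googlebot"):
    """List declared sitemaps and the URLs that robots.txt blocks."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        # site_maps() returns None when no Sitemap line is present.
        "sitemaps": parser.site_maps() or [],
        "blocked": [url for url in urls if not parser.can_fetch(user_agent, url)],
    }


# Example with a hypothetical robots.txt.
example_robots = """User-agent: *
Disallow: /private/
Sitemap: https://example.com/sitemap.xml
"""
report = crawl_report(
    example_robots,
    ["https://example.com/", "https://example.com/private/page"],
)
```

Any page you want indexed should not appear in the `blocked` list, and the `sitemaps` list should not be empty.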

Optimize Speed

The speed of your website is very important. If a web page takes more than 3 seconds to open, many users close the window and click on another web page. That’s why you need to optimize site speed, especially on mobile. Measure your site’s mobile speed with the Google PageSpeed Insights tool. Then, find and fix the errors and apps that are slowing down your mobile speed. This tool, offered free of charge by Google, allows you to find many errors on your website. It also gives you detailed ideas on improving and optimizing site speed. When you optimize your website speed, you can expect an improvement in SERP performance.

Many tools can automatically test the items in the checklist above. You may access many of these tools free of charge on the internet. You may also get premium versions of these tools for your needs.
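The mobile speed test can also be run programmatically through the PageSpeed Insights API. The sketch below builds a request for the `mobile` strategy and reads the performance score from the response. It assumes the v5 `runPagespeed` endpoint and a response containing `lighthouseResult.categories.performance.score`; verify both against the current API documentation. The function names are hypothetical, and regular use may require an API key, which is not shown here.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint of the PageSpeed Insights API, version 5.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url, strategy="mobile"):
    """Build the request URL for a PageSpeed Insights run."""
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


def performance_score(report):
    """Return the 0-100 performance score from a parsed response, or None."""
    try:
        score = report["lighthouseResult"]["categories"]["performance"]["score"]
    except (KeyError, TypeError):
        return None
    return None if score is None else round(score * 100)


def fetch_mobile_score(page_url):
    """Run the test over the network. Not called here; it needs internet access."""
    with urlopen(psi_request_url(page_url), timeout=60) as response:
        return performance_score(json.load(response))
```

Calling `fetch_mobile_score("https://example.com/")` would return the mobile performance score for that page, or `None` if the response does not contain one.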

Completing the Technical SEO Work

Applying this SEO checklist, as we have mentioned in our article, isn’t enough on its own. You also need to track and measure the impact of these changes on your website over time. This way, you may understand which technical SEO factors are helping your website rank. You may also see which of these factors have the most negative impact on your website’s rankings. Based on all these data, you may determine your new technical SEO checklist strategies for the future.

You will observe the positive effects of your future SEO optimization work in your website’s organic traffic. There is a tool that can be a great help in completing your technical SEO work: Google Analytics. By using this tool, you may measure the results of your technical SEO checklist, as we mentioned above. It also allows you to measure a wide range of traffic data for your website. Let’s talk about how to use this tool.

Google Analytics also lets you compare traffic measurements taken over different time intervals, and it is a free service from Google. You need reliable data at hand to create a healthy technical SEO strategy. This tool will be your biggest assistant at this point. Now that we have a basic knowledge of Google Analytics, let’s share the Google Analytics checklist we prepared for you. Next, let’s share how you can evaluate Google Analytics data.

Our Other Checklists about SEO:

- Website Content Checklist: Guide for Beginners

- On-Page SEO Checklist

- Basic SEO Checklist: Guide to Perfection

- Everything You Need to Know About Keywords: Keyword Research Checklist

- Link Building Checklist to Drive Traffic to Your Website

- Website Redesign SEO Checklist for 2023

Google Analytics Checklist and How to Evaluate Google Analytics Data

Let’s first share the Google Analytics checklist with you. The best way to learn this tool is to make the necessary checks. First of all, it will be enough for you to do the following ten checks:

- Have you added additional platforms like Google Ads?

- Did you activate the demographics and interests reports?

- Have you enabled website searches?

- Can users find what they are looking for when they search the website?

- Have you created alerts that require quick action? These are notification settings.

- What is your bounce rate (the rate of users leaving the site)?

- Have you blocked your own IP address and websites that send spam traffic to your website with the option of filters?

- Has there been a serious traffic change on your website in the last week?

- Which keywords and terms are more successful?

- What is the most successful content on your site? What is the reason for this?
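Two of the checks above, the rate of users leaving the site and the weekly traffic change, come down to simple arithmetic. The sketch below assumes the classic definition of bounce rate (single-page sessions divided by all sessions) and uses made-up numbers; your analytics tool may define and report these metrics differently.

```python
def bounce_rate(single_page_sessions, total_sessions):
    """Share of sessions that left after viewing only one page."""
    if total_sessions == 0:
        return 0.0
    return single_page_sessions / total_sessions


def weekly_change(previous_week, current_week):
    """Relative traffic change between two weeks, or None without a baseline."""
    if previous_week == 0:
        return None
    return (current_week - previous_week) / previous_week


# Hypothetical figures: 45 of 100 sessions bounced, visits fell from 1000 to 800.
rate = bounce_rate(45, 100)
change = weekly_change(1000, 800)
```

With these figures, the bounce rate is 45% and traffic dropped by 20% week over week. A drop of that size is the kind of serious change the checklist asks you to look for.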

As promised, we shared our checklist for this tool. We will now share how you can evaluate the important data it provides. This tool gives instant access to a lot of valuable data, which answers questions such as the following:

- How do users reach your website?

- What kind of interaction did users have with your website?

- How many people have visited any page on your website?

- How much time did users spend on this page?

- Where do the people who visit your website live?

- What are the top-performing keywords?

Now that you know exactly what to do, here are two detailed guides that will show you how to perform on-page and off-page SEO.

Technical SEO Checklist in Short

SEO studies are very important for websites. Technical SEO is related to the technical aspect of these studies and has a wide scope. In this technical SEO checklist guide, we tried to explain the terms included in this scope. We have also tried to show how you can evaluate them. As we mentioned in our article, evaluating this data is the most important part of technical SEO work. You may get help from several tools and apps in this direction.

As the most important of these tools, we have devoted a large place to Google Analytics. We also talked about how important it is for your website to be mobile-compatible in terms of technical SEO. We hope that our technical SEO guide will inspire your technical SEO analysis and work. If you think you need professional help with this checklist, consider getting help from an SEO consulting company.

Frequently Asked Questions About

The scope of technical SEO is quite wide. This scope covers an important part of both On-Page SEO and Off-Page SEO studies.

In technical SEO studies, the landing pages of websites are also optimized for search engines. These pages have features that search engines’ algorithms consider in the result ranking process.

It is the text that appears highlighted in the hypertext link and serves to open the target web page. It is also a good practice to link to other articles and information using anchor text. That is a useful method for blog posts.

The title tag is a succinct description of a particular content of a web page. They help search engines understand what your page is about. So, it is the searchers’ first impression of your page.

For this, there are many services from which you may get technical support for a fee. There are also paid tools that you can use in this regard.
