If you are familiar with SEO, you know that your web pages must be indexed to appear on the SERP. Okay, but how does your website get indexed by search engines? The invisible heroes are search engine bots. You can allow or disallow their visits to your website with a robots.txt file.

Are you new to SEO and trying to learn how to get all pages of your website crawled? We will explain the purpose of the robots.txt file and share the standard rules you might want to use to communicate with search engine robots like Googlebot.

What Is Robots.txt?

The primary purpose of the robots.txt file is to restrict access to your website by search engine robots, or bots. The file is quite literally a simple .txt text file that can be created and opened in almost any text editor, HTML editor, or word processor. Google defines robots.txt files with these words: “A robots.txt file tells search engine crawlers which pages or files the crawler can or can’t request from your site. This is used mainly to avoid overloading your site with requests.”

To make a start, name your file robots.txt and add it to the root layer of your website. This is important because all of the main reputable search engine spiders will automatically look for this file to take instructions before crawling your website.
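To make the “root layer” idea concrete, here is a minimal Python sketch that works out where a crawler would look for the file, given any page URL. The helper name robots_txt_url and the example.com addresses are our own illustrations, not part of any standard tool.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the address where a crawler would look for robots.txt.

    robots_txt_url is a hypothetical helper name used for illustration.
    """
    parts = urlsplit(page_url)
    # Keep the scheme and host (with port, if any); drop the page path,
    # query string, and fragment, because the file sits at the root.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("https://www.example.com/blog/seo/post.html?page=2"))
```

Whatever page you start from, the result points to the same single file at the top level of the host.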

How Does Robots.txt Work?

Search engines have two main jobs: crawling websites and indexing them. First, search engine bots follow links and the sitemap to crawl a website. Before crawling, the crawlers read the robots.txt file to find out how they should crawl the site. If there is no “Disallow: /” rule, they can crawl every page of the site, but if there are Disallow rules, some or all pages will not be crawled. Thus, you can allow or prevent crawling by search engine bots with robots.txt rules.
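If you want to see this check in action, the sketch below uses Python’s standard urllib.robotparser module to answer the same question a polite crawler asks before fetching a page. The can_crawl helper, the two sample rule sets, and the example.com URL are assumptions made for illustration; real search engines use their own parsers.

```python
from urllib.robotparser import RobotFileParser


def can_crawl(robots_txt: str, user_agent: str, url: str) -> bool:
    """Parse robots.txt text and report whether user_agent may fetch url.

    can_crawl is a hypothetical helper written for this example.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Blocks every bot from the whole site.
BLOCK_ALL = """\
User-agent: *
Disallow: /
"""

# An empty Disallow value restricts nothing.
ALLOW_ALL = """\
User-agent: *
Disallow:
"""

page = "https://www.example.com/blog/post"
print(can_crawl(BLOCK_ALL, "Googlebot", page))
print(can_crawl(ALLOW_ALL, "Googlebot", page))
```

The first rule set closes the whole site to every bot, while the second leaves it fully open.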

What Code Should You Write in a Robots.txt File?

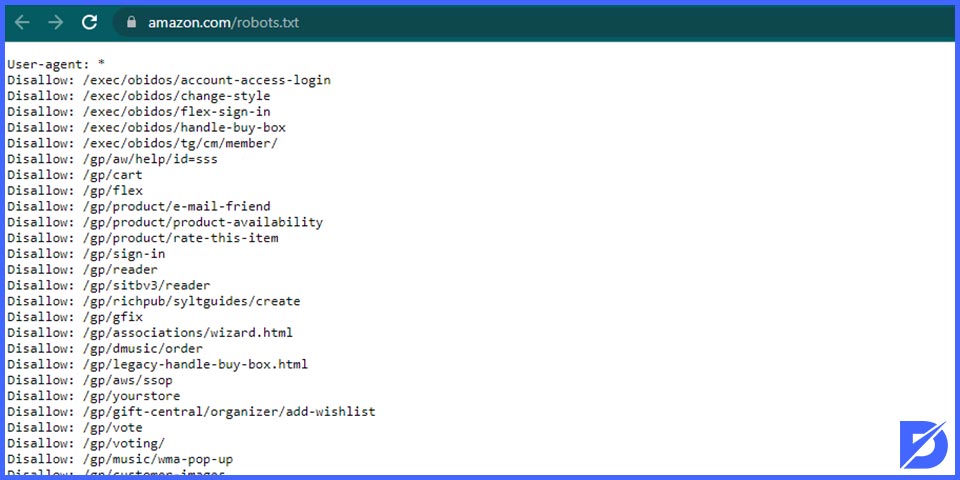

Now that you know the basic definition of a robots.txt file, let’s look at some robots.txt examples with visuals to help you understand how to create a robots.txt file.

So here’s how the file should look. To start with, on the very first line, add “User-agent: *”. This first command addresses the instructions that follow to all search bots. Once you have addressed a specific bot or, as in our case with the asterisk, all search bots, you come to the Allow and Disallow commands that you can use to specify your restrictions. To simply ban the bots from the entire website directory, including the homepage, add the following code: “Disallow: /”, that is, Disallow with a capital D, a colon, a space, and a forward slash. This forward slash represents the root layer of your website.

In most cases, you don’t want to restrict your entire website; you will restrict just specific folders or files. To do this, you specify each restriction on its own line, preceded by the Disallow command. In our example, you can see the necessary code to restrict access to an admin folder and everything inside it. The format is very similar if you want to restrict individual pages or files. For example, in Line 4, we’re not restricting the entire secure folder, just one HTML file within it.

Also, you should bear in mind that the paths in these directives are case-sensitive. You need to ensure that what you specify in your robots.txt file exactly matches your website’s file or folder name. The first example showed you the basics. Next, we come to slightly more advanced pattern-matching techniques.
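The folder and single-file restrictions described above can be tried out with a short Python sketch. The rule text here is our own reconstruction of that kind of file; the path /secure/private.html and the is_allowed helper are hypothetical names, since the exact entries in the screenshot are not reproduced in the text.

```python
from urllib.robotparser import RobotFileParser

# Illustrative rules: one folder and one individual file are restricted.
RULES = """\
User-agent: *
Disallow: /admin/
Disallow: /secure/private.html
"""

parser = RobotFileParser()
parser.parse(RULES.splitlines())


def is_allowed(path: str) -> bool:
    """Hypothetical helper: may Googlebot fetch this path on example.com?"""
    return parser.can_fetch("Googlebot", "https://www.example.com" + path)


for path in ["/admin/settings.html", "/secure/private.html",
             "/secure/public.html", "/"]:
    print(path, "allowed" if is_allowed(path) else "blocked")
```

Everything under the admin folder is blocked, but only the one named file inside the secure folder is; the rest of that folder and the homepage stay open.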

These techniques can be handy if you’re looking to block files or directories in bulk, because they let you do so without adding many lines of commands to your robots.txt file. We call these bulk commands pattern matching. One of the most common examples you might want to use is restricting access to all dynamically generated URLs that contain a question mark. For example, check Line 5. All you need to catch every URL of this type is a forward slash, an asterisk, and then a question mark symbol (/*?).

You can also use pattern matching to block access to all directories that begin with admin, for example. If you check Line 6, you’ll see how we’ve again used an asterisk to match all directories that start with admin. Suppose you have the following folders in your root directory: “admin-panel,” “admin-files,” and “admin-secure.” This line will block access to all three folders, as they all start with “admin.”

The final pattern-matching command lets you identify and restrict access to all files that end with a specific extension. On Line 7, you’ll see how a single short command instructs the search engine bots and spiders not to crawl or, ideally, cache any pages that end with the .php extension.

So after your first forward slash, use an asterisk followed by a full stop and then the extension. You conclude the command with a dollar sign to signify an extension rather than an arbitrary string. As a result, the dollar sign tells the bots that the file to be restricted must end with this extension.
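To get a feel for how the three pattern-matching rules behave, here is a rough Python sketch that turns each pattern into a regular expression and tests a few paths against it. It is only an approximation built on assumptions: the function names and sample paths are hypothetical, it handles Disallow patterns only, and it ignores Allow rules and the precedence logic that real crawlers apply.

```python
import re


def rule_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a compiled regex.

    '*' matches any run of characters; a trailing '$' means the URL
    must end at that point.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape each literal piece, then join the pieces with '.*' for every '*'.
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_blocked(path: str, disallow_patterns: list[str]) -> bool:
    # re.match anchors at the start of the path, as robots.txt rules do.
    return any(rule_to_regex(p).match(path) for p in disallow_patterns)


PATTERNS = ["/*?", "/admin*", "/*.php$"]

for path in ["/products?id=7", "/admin-panel/index.html",
             "/contact.php", "/blog/post.html"]:
    print(path, "blocked" if is_blocked(path, PATTERNS) else "allowed")
```

The first three sample paths are caught by the question-mark, admin, and .php rules respectively, while the plain blog page passes through. Note that the dollar sign makes the last rule strict: a path such as /contact.php5 would not match “/*.php$” on its own.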

Conclusion

And this sums up our guide. Using those six different techniques, you should be able to put together a well-optimized robots.txt file that tells search engines which content they may crawl or cache. We must point out that website hackers often check robots.txt files, because the paths listed there can point them to sensitive areas they might want to attack. Always be sure to password-protect and test the security of your dynamic pages, particularly if you’re advertising their location in a robots.txt file. Also, to learn more about robots.txt files, you can check the Google Support page.

Frequently Asked Questions About Robots.txt

You need to use it to direct which pages and images will be crawled and which will not show up. As a result, the robots.txt file is certainly crucial for SEO.

It should contain Allow and Disallow commands.

It must be located at the root of your website host.

It is not compulsory: if you don’t have a robots.txt file, search engine bots will simply crawl your website as usual. However, sometimes you want bots to crawl some pages and skip others. In that situation, you need a robots.txt file to guide the bots.

If you don’t use a robots.txt file, the search engine bots will crawl your website normally and index the pages.
