Google adds many conveniences and innovations to everyday life and to the internet. For site owners, one of the most important of these is crawling, the process Google uses to discover the pages on a site. Crawling does not by itself push a page to the top of the search results, but a page that Google has never crawled cannot appear in them at all. If you want your site to succeed in search, you should learn how to get Google to crawl it, so start reading right away!

What is Crawling?

If you make changes to your Google Site or personal page, you should then ask Google to crawl the page again. Google finds new pages by crawling the web with the help of a web spider known as Googlebot. Crawling is the process of following hyperlinks across the internet to find new content. It is an automated process that builds a complete picture of the content on a website, and search engines use that picture to direct searchers to the right pages.

What does this mean in practice? If you change your website but Google has not yet picked up the most recent version, people will be directed to your old content rather than your new content. Crawling is automated, but you can ask Google to re-crawl your site. There are a couple of ways to make that request, but you shouldn't send requests often, as repeated requests don't speed up the process.
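To make "following hyperlinks to find new content" concrete, here is a minimal Python sketch of a crawler's core loop: fetch a page, extract its links, and queue any link not seen before. It is only an illustration, not how Googlebot works internally. To keep it self-contained, the "site" is a hypothetical in-memory dictionary from URL to HTML instead of real HTTP requests.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(pages, start_url):
    """Breadth-first crawl of an in-memory site; returns URLs in discovery order."""
    seen = {start_url}
    order = []
    queue = deque([start_url])
    while queue:
        url = queue.popleft()
        html = pages.get(url)
        if html is None:
            continue  # link points at a page we cannot "fetch"
        order.append(url)
        extractor = LinkExtractor()
        extractor.feed(html)
        extractor.close()
        for href in extractor.links:
            absolute = urljoin(url, href)  # resolve relative links like "/blog"
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return order


# Hypothetical site: URL -> HTML. Nothing links to /hidden.
site = {
    "https://example.com/": '<a href="/blog">Blog</a> <a href="/about">About</a>',
    "https://example.com/blog": '<a href="/blog/post-1">First post</a>',
    "https://example.com/about": "<p>No links here.</p>",
    "https://example.com/blog/post-1": '<a href="/">Home</a>',
    "https://example.com/hidden": "<p>Orphan page.</p>",
}

print(crawl(site, "https://example.com/"))
# ['https://example.com/', 'https://example.com/blog',
#  'https://example.com/about', 'https://example.com/blog/post-1']
```

The page at `/hidden` is never discovered because no other page links to it, which is why internal links matter so much for crawling.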

How to Make a Crawling Site?

Most websites on the internet aim to rank higher in search results, and reaching the top takes deliberate work. One of the most important steps, and perhaps the most important one for crawling, is a bot-friendly website. A crawlable site lets search engine bots do their job, and a site whose links can be crawled can reach a larger audience. So how does crawl accessibility help a site rank well in Google? We'll go over it in a few easy steps.

Bots Need Proper Commands

Your site gives commands to Google's bots; this is how the system works. You can give these commands in several ways, for example through your site's robots.txt file or through changes to the domain's server settings. Googlebot checks these instructions regularly, so changes you make are picked up on later crawls. When your site ranks well in Google, you will see the benefit of getting them right.
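To see how a crawler reads robots.txt commands, Python's standard-library `urllib.robotparser` module can answer "may this user agent fetch this URL?" The sketch below uses a hypothetical robots.txt for example.com. Python's parser implements the original robots.txt rules (the first matching rule wins and `*` wildcards inside paths are not supported), so Google's own matching can differ in edge cases; treat it as a quick sanity check, not a definitive test.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt. A crawler obeys only the most specific group that
# matches it, so the Googlebot group repeats the /admin/ rule.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

User-agent: Googlebot
Disallow: /admin/
Disallow: /drafts/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for path in ["/", "/blog/post-1", "/drafts/new-post", "/admin/login"]:
    url = "https://example.com" + path
    verdict = "allowed" if parser.can_fetch("Googlebot", url) else "blocked"
    print(f"{path}: {verdict} for Googlebot")
# Expected: / and /blog/post-1 allowed; /drafts/new-post and /admin/login blocked
```

Other crawlers fall back to the `User-agent: *` group, so they may still fetch `/drafts/` in this example.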

Check The Server

Bots cannot crawl pages that return page errors or server errors. Because these errors hurt your SEO, fix them before anything else.
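A quick way to spot page and server errors is to request each important URL and look at the HTTP status code. The sketch below uses only Python's standard library; grouping codes into these verdicts is our own simplification, not an official Google classification. `check_url` needs network access, and some servers answer `HEAD` requests with 405, in which case you can switch the method to `GET`.

```python
import urllib.error
import urllib.request


def classify_status(code):
    """Map an HTTP status code to a rough crawlability verdict."""
    if 200 <= code < 300:
        return "ok"
    if 300 <= code < 400:
        return "redirect"       # the crawler follows it to another URL
    if code in (404, 410):
        return "not found"      # the page cannot be indexed
    if 400 <= code < 500:
        return "client error"
    if 500 <= code < 600:
        return "server error"   # fix these first
    return "unknown"


def check_url(url, timeout=10):
    """Fetch a URL and return (status_code, verdict); requires network access."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            # urlopen follows redirects, so this is the final status.
            code = response.status
    except urllib.error.HTTPError as err:
        code = err.code  # 4xx and 5xx responses arrive as exceptions
    except urllib.error.URLError as err:
        return None, f"unreachable: {err.reason}"  # e.g. server down, DNS failure
    except TimeoutError:
        return None, "timed out"
    return code, classify_status(code)
```

Running `check_url` over your most important pages and flagging anything whose verdict is not "ok" gives you a short list of errors to fix first.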

Choose The Right Design

Your site's design determines how crawlers move between pages, because they travel along links. You should therefore create strong and plentiful links to your important pages, make sure every page is linked from at least one other page, and keep the number of clicks from the home page to any other page low, including the page that is furthest from the home page. Otherwise, Google may not be able to find and crawl some of the pages on your website.
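The "few clicks from the main page" advice can be measured with a breadth-first search over your internal links. In this sketch, `site_links` is a hypothetical map from each page to the pages it links to; in practice you would build it from a crawl of your own site. Orphan pages (pages that nothing links to) can only be found by comparing against a full list of your pages, such as your sitemap or a CMS export, because a crawler that follows links never reaches them.

```python
from collections import deque


def click_depths(links, home):
    """Return {page: minimum number of clicks from home} for reachable pages."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # BFS: the first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal-link graph: page -> pages it links to.
site_links = {
    "/": ["/blog", "/products"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/blog/post-2"],
    "/blog/post-2": ["/blog/post-3"],
    "/products": ["/"],
    "/old-landing-page": ["/"],  # links out, but nothing links to it
}

depths = click_depths(site_links, "/")
# In practice, take the full page list from your sitemap or CMS instead.
all_pages = set(site_links) | {t for targets in site_links.values() for t in targets}
orphans = sorted(all_pages - set(depths))
deepest = max(depths, key=depths.get)

print("Orphan pages:", orphans)  # Orphan pages: ['/old-landing-page']
print("Deepest page:", deepest, "at", depths[deepest], "clicks")
# Deepest page: /blog/post-3 at 4 clicks
```

Adding a link from `/blog` directly to the newest posts, for example, would shorten the path to `/blog/post-3`.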

Use Apt Technology For SEO Bots

Google has some limitations here: its bots cannot always understand add-ons, videos, and images on their own, so the content these elements carry should also be available in text form. If you build your site with Google's own tools, use plugins and add-ons that are suitable for them. If you do this, Google will be able to crawl your site.
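One common way to give an image a text form is its `alt` attribute. As an illustration (our own example, not a requirement stated above), this sketch lists the images on a page that have no alt text. An empty `alt=""` is legitimate for purely decorative images, so review the results rather than filling in every one blindly.

```python
from html.parser import HTMLParser


class ImageAltChecker(HTMLParser):
    """Records the src of every <img> tag whose alt text is missing or empty."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attributes = dict(attrs)
            alt = attributes.get("alt") or ""
            if not alt.strip():
                self.missing_alt.append(attributes.get("src", "(no src)"))


# Hypothetical page HTML.
html = """
<img src="/images/logo.png" alt="Company logo">
<img src="/images/chart.png">
<img src="/images/team.jpg" alt="">
"""

checker = ImageAltChecker()
checker.feed(html)
checker.close()
print(checker.missing_alt)  # ['/images/chart.png', '/images/team.jpg']
```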

Using GSC URL Inspection Tool to Re-Crawl

So far, we have assumed that you are building a new site, so that Google can crawl it once it goes live. But what if you already have a site, or you have updated it and need Google to crawl it again? This section answers those questions.

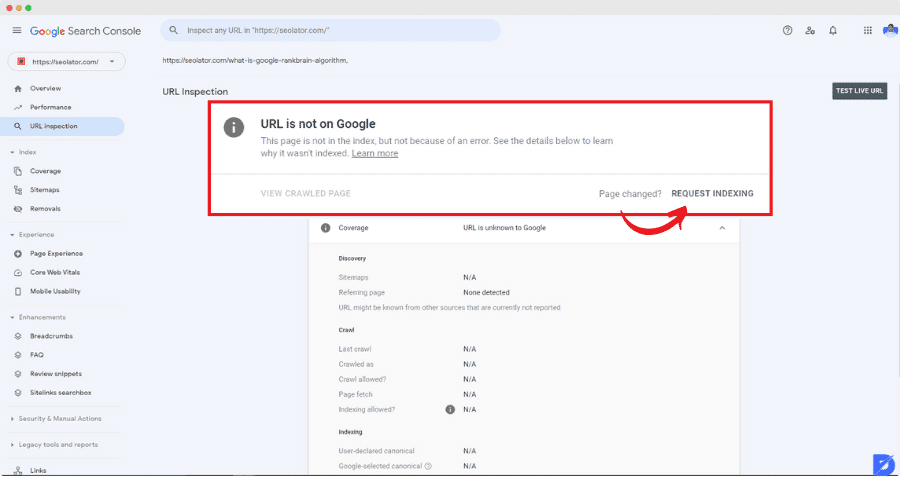

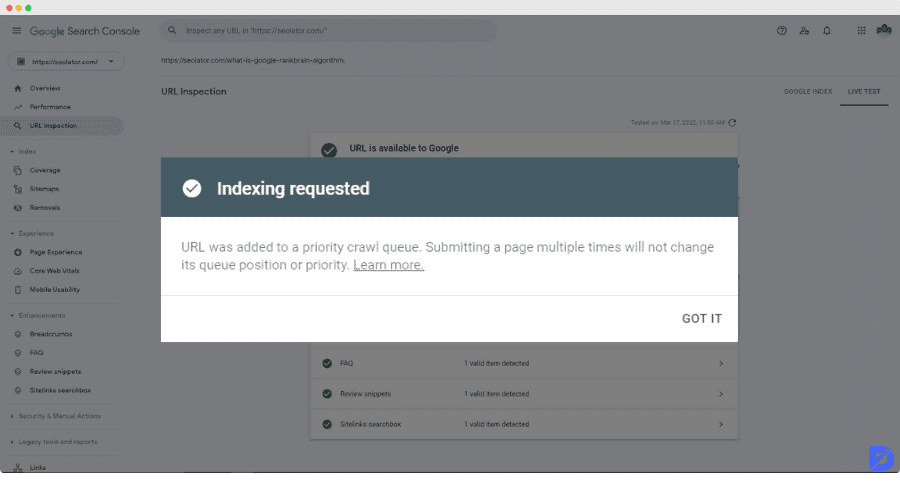

You can do this in two ways, both described in Google's developer documentation and both carried out in Google Search Console. Which one to use depends on how many URLs you need crawled. If you are dealing with only a few URLs, use the URL Inspection tool: inspect the URL, then click "Request indexing." If there is no problem, Google accepts the request and adds the URL to its crawl queue. If you want Google to crawl more than a few URLs, submit a sitemap instead, which you can also do in Google Search Console.

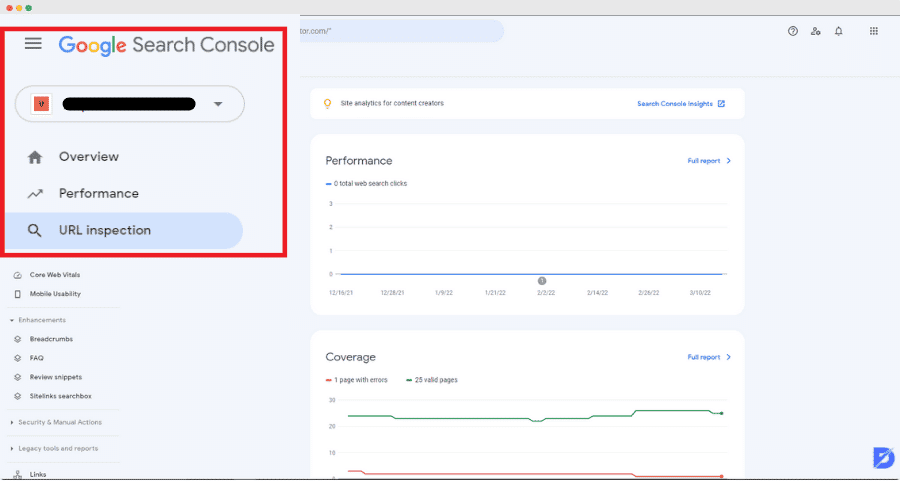

How to use the URL Inspection tool:

- First we enter Google Search Console Page and click ‘Start Now’

- Click URL Inspection located below ‘Overview’ & Paste The URL

- Request Indexing For Your URL

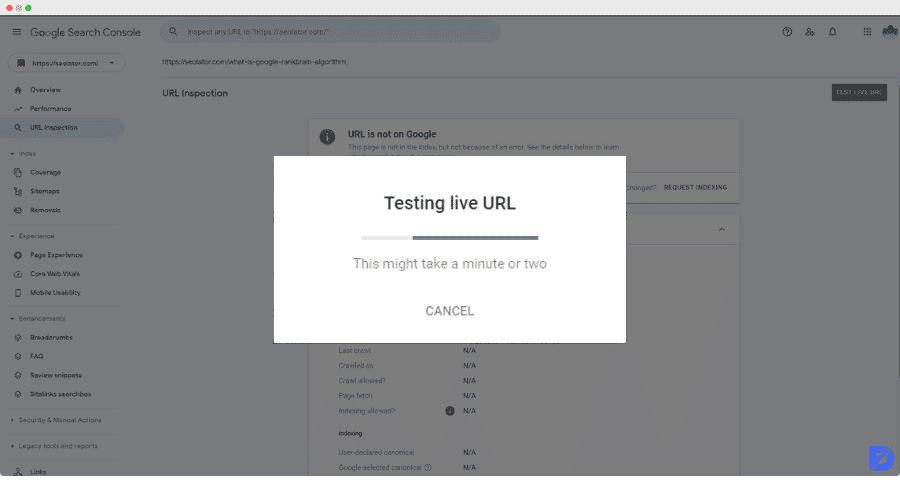

- Wait for Indexability test

- Wait for Google to Index
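For the sitemap option, a sitemap is an XML file listing the URLs you want crawled, in the format defined by the sitemaps.org protocol. This minimal sketch builds one with Python's `xml.etree.ElementTree`; the URLs and dates are placeholders. You would upload the result to your site (commonly as `/sitemap.xml`) and submit its URL in the Sitemaps report in Google Search Console.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, lastmod) pairs; lastmod is YYYY-MM-DD or None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # special characters are escaped for us
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages and last-modified dates.
pages = [
    ("https://example.com/", "2024-05-01"),
    ("https://example.com/blog/post-1", "2024-05-03"),
    ("https://example.com/about", None),
]

sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Leaving out `lastmod` for pages whose change date you don't know is better than guessing one.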

Crawlability Problems

Four main issues can cause crawlability problems, and it is a good idea to check each of them: problems in robots.txt or meta tags, broken internal links, a server that is down, and the site's design. If you find a problem in any of these areas, fix it so that the rest of your work is effective. The sooner you solve these issues, the sooner Google can crawl your site.
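For the robots.txt and meta tag issue, a page-level `noindex` or `nofollow` directive is easy to overlook. This sketch reads the `robots` and `googlebot` meta tags from a page's HTML and reports what they block (`none` is shorthand for both). It only inspects the HTML; the same directives can also be sent in an `X-Robots-Tag` HTTP header, which this code does not check.

```python
from html.parser import HTMLParser


class RobotsMetaReader(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = attributes.get("content") or ""
            for directive in content.split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())


def robots_meta_problems(html):
    """Return human-readable crawl problems caused by robots meta tags."""
    reader = RobotsMetaReader()
    reader.feed(html)
    reader.close()
    problems = []
    if reader.directives & {"noindex", "none"}:
        problems.append("page asks not to be indexed")
    if reader.directives & {"nofollow", "none"}:
        problems.append("links on this page will not be followed")
    return problems


# Hypothetical page head.
page = '<head><meta name="robots" content="noindex, nofollow"></head>'
print(robots_meta_problems(page))
# ['page asks not to be indexed', 'links on this page will not be followed']
```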

So how do you solve these problems? Each of these areas can hide several different issues. A robots.txt rule or meta tag, for example, can stop the crawler from following the links to content you have added; in this case, double-check every rule and tag that applies to the affected pages and links. Broken internal links may show up as URL errors, and the cause can be as simple as a typo. For each problem you encounter, retrace the steps that introduced it, find its source, and fix it there.
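Because a broken internal link is often just a typo, a checker can suggest the closest real URL. This sketch assumes you already have the internal link paths found on your pages and the set of pages that actually exist (for example, from your sitemap); both lists below are hypothetical. It uses Python's `difflib.get_close_matches`, and the 0.8 similarity cutoff is an arbitrary choice you may want to tune.

```python
import difflib


def find_broken_links(links, known_pages):
    """Map each link that points at a missing page to the closest existing page.

    The suggestion is None when no existing page is similar enough.
    """
    candidates = sorted(known_pages)  # sorted for deterministic results
    report = {}
    for link in links:
        if link not in known_pages:
            matches = difflib.get_close_matches(link, candidates, n=1, cutoff=0.8)
            report[link] = matches[0] if matches else None
    return report


# Hypothetical data: pages that exist, and links found on your pages.
known_pages = {"/", "/blog", "/blog/getting-started", "/contact"}
links_found = ["/blog", "/blog/getting-startd", "/contcat", "/pricing"]

for link, suggestion in find_broken_links(links_found, known_pages).items():
    print(f"Broken link {link!r}; did you mean {suggestion!r}?")
```

A `None` suggestion, as for `/pricing` here, usually means the page was removed or never created, so the link itself should be updated or deleted.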

Conclusion

In this article, we answered two questions: how to make a site crawlable and, if you already have one, how to get it re-crawled. We also saw how much crawlability issues, site crawls, crawled pages, and site design matter, and how much they contribute to a site's strength and visibility. If you are going to create a new site or update an existing one, read the relevant sections and don't forget the tips.

Read our article about the Google Indexing API, another way to have the pages on your site crawled by Google's bots!

Frequently Asked Questions

Asking Google to crawl a page takes only a few steps: open Google Search Console, go to URL Inspection, paste the URL you want Google to crawl, and click "Request Indexing."

To find out whether Google has crawled your site, use URL Inspection in Google Search Console; Google's Search Console Help explains it in detail. Enter the URL of the site or page to see whether it is on Google, then click "Test live URL" and open the live test result to check whether Google can crawl the page right now.
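If you have many URLs to check, Search Console also offers a URL Inspection API. Unlike the live test, it reports on the version Google has already indexed (including the last crawl time), and it cannot request indexing. This sketch only prepares the request and summarizes a response. Obtaining an OAuth 2.0 access token with a Search Console scope is not shown, the page and property URLs are hypothetical, and `siteUrl` must match your Search Console property exactly (for example `https://example.com/` or `sc-domain:example.com`).

```python
import json
import urllib.request

INSPECT_ENDPOINT = "https://searchconsole.googleapis.com/v1/urlInspection/index:inspect"


def build_inspection_request(page_url, property_url, access_token):
    """Prepare (but do not send) a URL Inspection API request."""
    body = json.dumps({"inspectionUrl": page_url, "siteUrl": property_url})
    return urllib.request.Request(
        INSPECT_ENDPOINT,
        data=body.encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",  # token obtained elsewhere
            "Content-Type": "application/json",
        },
        method="POST",
    )


def crawl_summary(response_json):
    """Extract crawl-related fields from a URL Inspection API response."""
    result = response_json.get("inspectionResult", {})
    status = result.get("indexStatusResult", {})
    return {
        "verdict": status.get("verdict"),
        "coverage": status.get("coverageState"),
        # May be missing if Google has no crawl recorded for the URL.
        "last_crawl": status.get("lastCrawlTime"),
    }


request = build_inspection_request(
    "https://example.com/blog/post-1",  # hypothetical page
    "https://example.com/",             # hypothetical URL-prefix property
    "YOUR_ACCESS_TOKEN",                # placeholder, not a real token
)
```

With a valid token, `urllib.request.urlopen(request)` sends the request, and passing `json.load(response)` to `crawl_summary` extracts the crawl fields.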

If Google isn't crawling your site, you may have set it up recently without following Google's SEO guidelines, or, if you updated it recently, you may not have asked Google to crawl the changed pages. To get your site crawled or re-crawled, see the "How to Make a Crawling Site?" and "Using GSC URL Inspection Tool to Re-Crawl" sections of this article.

Yes, you can ask Google to crawl your site. If your site is still being set up, see the "How to Make a Crawling Site?" section. If you already have a website, see the re-crawl section to have Google crawl it.

Poor crawlability harms your SEO, so you may not rank well. If Googlebot cannot access your pages, they cannot be indexed, and users will not find them in search results. As a result, your site's visibility suffers.
