As SEO has developed, many new dynamics have entered optimization work. One of them is word vectors. Word vectors have been part of SEO work for the last few years, but as search engine algorithms evolve, the effect of word vectors, or word embeddings, on SEO keeps increasing.

You can apply different types of word embeddings in your SEO work. So, what is the SEO benefit of doing this? How do word vectors work? What are the types of word embeddings? Let’s find out the answers to these questions together.

What Are Word Vectors?

We can define word vectors simply as a type of word representation that allows words with similar meanings to have similar representations. Google uses this technique in natural language processing (NLP) to represent words while analyzing text. Each word is represented as a real-valued vector, that is, a list of numbers that encodes the contexts the word appears in. In this way, an NLP system can detect which words have meanings similar to others, and so it can understand the context of sentences and texts more easily and clearly.
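To make "similar meaning, similar vector" concrete, here is a minimal sketch that compares toy word vectors with cosine similarity, a common way to measure how close two embeddings are. The three-dimensional values below are made up for illustration; real embeddings are learned from data and usually have far more dimensions.

```python
import math

# Hypothetical 3-dimensional word vectors; real embeddings are learned
# from a corpus and typically have 50 to 300 dimensions.
vectors = {
    "cat":    [0.8, 0.1, 0.3],
    "kitten": [0.75, 0.15, 0.35],
    "car":    [0.1, 0.9, 0.2],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

print(cosine_similarity(vectors["cat"], vectors["kitten"]))
print(cosine_similarity(vectors["cat"], vectors["car"]))
```

Here "cat" and "kitten" score close to 1, while "cat" and "car" score much lower.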

There are several types of word embedding techniques. Two of them are especially popular: prediction-based embedding and frequency-based embedding. Let’s take a closer look at each of them.

Prediction-Based Embedding

As the name suggests, this method learns word representations by predicting a word from the context of the sentence it appears in. First, let’s look at what “context” means in this definition; then we will explain it with an example. The context of a target word is the word or words around it. Because the same target word appears in many different contexts across a text, the system can learn from all of them, and this is exactly where its predictive power comes into play. Suppose our example sentence is as follows:

The cat jumped over the puddle.

Now, remove the word “jumped” from this sentence. From the surrounding words, the system can predict that “jumped” belongs in the middle of the sentence. This type of model is called CBOW, which stands for Continuous Bag of Words. To predict the missing word from the context, the model takes the context words in their one-hot representation, in which each word is a vector with a single 1 and 0s everywhere else. This representation is simple, but it has a couple of weak points.
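A minimal sketch of how CBOW training examples could be derived from the example sentence. The window of two words on each side and the helper name `cbow_pairs` are illustrative assumptions; a real CBOW model would then learn to predict each target word from its context words.

```python
def cbow_pairs(tokens, window=2):
    """Build (context words, target word) pairs for a CBOW-style model."""
    pairs = []
    for i, target in enumerate(tokens):
        left = tokens[max(0, i - window):i]       # up to `window` words before
        right = tokens[i + 1:i + 1 + window]      # up to `window` words after
        pairs.append((left + right, target))
    return pairs

sentence = "the cat jumped over the puddle".split()
for context, target in cbow_pairs(sentence):
    print(context, "->", target)
```

For the target “jumped”, the context is “the”, “cat”, “over”, and “the”.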

The most obvious weakness is that you cannot get meaningful results by doing arithmetic with one-hot vectors: every pair of different words is equally far apart, so the vectors say nothing about which words are similar. A second weakness is that the vectors become very high-dimensional, because one dimension is assigned to every word in the vocabulary. As the number of words grows, so does the size of each vector, and the larger the dimension, the harder it becomes to predict or generate a word from the context of the sentence.
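Both weaknesses of one-hot vectors are easy to see in a short sketch. The helper `one_hot_vectors` is hypothetical; it simply gives every vocabulary word its own dimension.

```python
def one_hot_vectors(vocabulary):
    """Map each word to a vector with a single 1 at the word's own index."""
    size = len(vocabulary)
    return {word: [1 if j == i else 0 for j in range(size)]
            for i, word in enumerate(vocabulary)}

vocab = sorted(set("the cat jumped over the puddle".split()))
one_hot = one_hot_vectors(vocab)

# One dimension per vocabulary word: 5 unique words -> 5 dimensions.
print(len(one_hot["cat"]))

# The dot product of any two different words is 0, so one-hot vectors
# carry no information about which words are similar.
dot = sum(a * b for a, b in zip(one_hot["cat"], one_hot["puddle"]))
print(dot)
```

With a real vocabulary of tens of thousands of words, each vector would have tens of thousands of dimensions.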

Frequency-Based Embedding

This model works in a highly mathematical way. It first learns a vocabulary from all of the documents (pages). Then it models each document by counting how many times each vocabulary word appears in it. However, many words appear in almost every text; articles and auxiliary verbs are the best examples. Because they occur in every document, such words carry little information about the actual context of a given text, and their counts dwarf the frequencies of rarer, more interesting words that would say more about the context. The TF-IDF transform helps solve this problem: it considers how often a word occurs across the entire corpus, not just in a single document.

TF stands for term frequency, and TF-IDF means term frequency times inverse document frequency. Simply put, TF-IDF gives a high weight to words that are frequent in a particular document but rare across the rest of the corpus, and a low weight to words that appear everywhere. In this way, it highlights the words that make it easier for the frequency-based embedding model to understand the context.
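A minimal from-scratch sketch of the counting and TF-IDF steps, assuming a tiny made-up corpus. It uses the raw term count as TF and log(N / df) as IDF, which is only one of several common variants (libraries often add smoothing), so exact numbers will differ between implementations.

```python
import math
from collections import Counter

documents = [
    "the cat sat on the mat",
    "the dog chased the cat",
    "the stock market fell",
]
tokenized = [doc.split() for doc in documents]

# Document frequency: in how many documents each word appears.
df = Counter(word for doc in tokenized for word in set(doc))
n_docs = len(tokenized)

def tf_idf(doc_tokens):
    """Raw term count times log(N / df); one of several common variants."""
    counts = Counter(doc_tokens)
    return {word: count * math.log(n_docs / df[word])
            for word, count in counts.items()}

scores = tf_idf(tokenized[0])
print(scores["the"])  # appears in every document
print(scores["mat"])  # appears only in this document
```

A word such as “the”, which appears in every document, gets a score of 0, while “mat”, which appears in only one document, gets a positive score.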

The model also uses a word co-occurrence matrix to make sense of the context of a text, so it can capture what words mean when they appear together. The idea is simple: the matrix records how often each pair of words appears together (for example, within a few words of each other) in the corpus. So, what are the application areas of frequency-based embedding?
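A short sketch of how a co-occurrence matrix could be counted, assuming a window of one word on each side (the window size and the toy corpus are illustrative choices). The counts are stored in a dictionary keyed by word pairs rather than as a full matrix, which keeps the example small.

```python
from collections import defaultdict

def cooccurrence_counts(sentences, window=1):
    """Count how often each pair of words appears within `window` positions."""
    counts = defaultdict(int)
    for tokens in sentences:
        for i, word in enumerate(tokens):
            for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
                if j != i:
                    counts[(word, tokens[j])] += 1
    return counts

corpus = [
    "i like deep learning".split(),
    "i like nlp".split(),
    "i enjoy flying".split(),
]
counts = cooccurrence_counts(corpus)
print(counts[("i", "like")])    # "i" and "like" are neighbors twice
print(counts[("like", "nlp")])  # neighbors once
```

Each row of the resulting matrix describes a word by the words it tends to appear next to.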

Text classification comes first among its uses, and the model is also quite successful in sentiment analysis. With the development of NLP, the application areas of frequency-based embedding keep widening. The key to its success in these tasks is the machine learning algorithm that works on the frequency features; with it, texts can be classified easily and quickly.

What Is an Embedding Matrix?

An embedding matrix has one column for each word in the vocabulary: N columns, where N is the size of the vocabulary + 1 (the extra index is often reserved, for example for padding), and one row for each embedding dimension. The developer must set these dimensions manually. The values start out random, and the matrix is then trained with gradient descent, which adjusts the values so that similar words end up embedded close together. In other words, gradient descent teaches the matrix the features of words through the values that correspond to those features. Keep in mind that the matrix starts out random and works only with numbers. So how can you teach non-numeric data, such as words, to this matrix through machine learning?

You cannot do so directly, because machine learning cannot use non-numeric data. The words must first be converted into numbers. Many algorithms can perform this conversion, but three of them are the most popular:

- Tokenization (Text to Sequence)

- One-Hot Encoding

- Term Frequency-Inverse Document Frequency

These are the most popular algorithms for turning words into numbers that the embedding matrix can work with. The most popular of them, and often the most effective for this purpose, is tokenization, which assigns a number to each unique word in the corpus. These numbers point to entries in the embedding matrix, and the values in the matrix are nothing but parameters that the model learns over time from large amounts of data. Once again, the main tool in this learning process is gradient descent.
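The sketch below ties tokenization and the embedding matrix together under simplifying assumptions. Tokenization assigns each unique word an integer starting at 1, leaving 0 free, so the matrix gets vocabulary size + 1 entries. Unlike the column-per-word layout described earlier, the sketch stores one vector per row, which is just the transposed convention. The gradient-descent part uses a hypothetical toy objective (pushing the dot product of the “cat” and “dog” vectors toward 1) in place of a real training loss; it only shows how random starting values become learned parameters.

```python
import random

def build_word_index(texts):
    """Tokenization (text to sequence): give each unique word an integer.

    Indices start at 1 so that 0 stays free (commonly used for padding),
    which is why the embedding matrix gets vocabulary size + 1 entries.
    """
    index = {}
    for text in texts:
        for word in text.lower().split():
            if word not in index:
                index[word] = len(index) + 1
    return index

def to_sequence(text, index):
    """Replace each known word with its integer index."""
    return [index[w] for w in text.lower().split() if w in index]

corpus = ["The cat jumped over the puddle", "The dog jumped"]
word_index = build_word_index(corpus)
sequences = [to_sequence(t, word_index) for t in corpus]

# Embedding matrix: one row per index (vocabulary size + 1), each row a
# vector of a chosen length, initialized with small random values.
random.seed(0)
embedding_dim = 4
n_entries = len(word_index) + 1
embedding_matrix = [[random.uniform(-0.05, 0.05) for _ in range(embedding_dim)]
                    for _ in range(n_entries)]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def gradient_step(matrix, i, j, target, lr=0.1):
    """One gradient-descent step on the toy loss (matrix[i] . matrix[j] - target)^2."""
    error = dot(matrix[i], matrix[j]) - target
    grad_i = [2 * error * x for x in matrix[j]]
    grad_j = [2 * error * x for x in matrix[i]]
    matrix[i] = [x - lr * g for x, g in zip(matrix[i], grad_i)]
    matrix[j] = [x - lr * g for x, g in zip(matrix[j], grad_j)]
    return error ** 2

# Hypothetical training signal: "cat" and "dog" should end up similar.
cat, dog = word_index["cat"], word_index["dog"]
losses = [gradient_step(embedding_matrix, cat, dog, target=1.0) for _ in range(200)]
print(sequences)
print(losses[0], losses[-1])
```

The first sentence becomes the sequence [1, 2, 3, 4, 1, 5], and the loss shrinks toward zero as gradient descent updates the two rows.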

What Is the Purpose of Word Embeddings?

One of the biggest advantages of word embeddings is that they reduce dimensionality compared with one-hot vectors. In addition, word vectors can be used to predict the words around a given word, and thanks to this ability they capture the semantic relationships between words better than many other methods. Because of these benefits, they play a key role in many studies. Using word vectors is straightforward: word embeddings serve as input to machine learning models. To feed word embeddings into a machine learning model, you can follow these steps in order:

- Identify the words you will add as input to machine learning.

- Then, determine the numeric representation (vector) of each of these words, using the embedding method you have chosen.

- Finally, use them in inference or training.
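A minimal sketch of the three steps, assuming a hypothetical embedding table and a hypothetical linear scorer in place of a trained model. Averaging the word vectors is just one simple way to turn several words into a single input vector; words missing from the table (here “to”) are skipped.

```python
# Step 1: identify the words to feed into the model.
text = "cheap flights to paris"
words = text.split()

# Step 2: look up a numeric representation for each word.
# These 3-dimensional vectors are hypothetical; in practice they would
# come from a trained embedding such as Word2Vec or GloVe.
embeddings = {
    "cheap":   [0.9, 0.1, 0.0],
    "flights": [0.2, 0.8, 0.1],
    "paris":   [0.1, 0.3, 0.9],
}
known = [embeddings[w] for w in words if w in embeddings]

# Step 3: combine them into one input vector (here: the element-wise
# average) and use it for inference with a hypothetical linear scorer.
features = [sum(values) / len(known) for values in zip(*known)]
weights = [0.5, 1.0, -0.2]
score = sum(w * x for w, x in zip(weights, features))
print(features, score)
```

In a real pipeline, the scorer would be a trained model, and the same feature vectors could be used for training as well as inference.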

Once you have followed these three steps, keep in mind that the embeddings also represent the underlying usage patterns of the corpus you used to train the matrix. You can also visualize them, and reuse them as input when training other models. Now, let’s take a closer look at the most famous word embedding algorithms.

The Most Famous Word Embedding Algorithms

Here are some of the best-known word embedding algorithms.

GloVe

GloVe stands for Global Vectors for Word Representation, and it is often described as an extension of the Word2Vec method. It was developed by Jeffrey Pennington and his colleagues at Stanford, with the main goal of learning word vectors efficiently. GloVe’s developers drew on LSA (Latent Semantic Analysis), one of the most popular matrix factorization techniques for building word representations. LSA, however, is not very good at capturing meaning and demonstrating it on tasks such as calculating analogies. You can see this clearly if you compare it with learned methods such as Word2Vec.

However, LSA also has strong points, which explain its popularity: it produces standard vector space model representations of words, and it excels at using global text statistics. GloVe’s approach to word embedding combines the two worlds. Simply put, Word2Vec learns from local context, while LSA relies on the global statistics captured by matrix factorization; GloVe brings both together.

Instead of learning from one local context window at a time, GloVe builds an explicit word co-occurrence (word-context) matrix from statistics across the entire corpus. This way of working gives it another advantage: it produces a better learning model, which in turn can yield better word embeddings. Its authors describe it as a new global log-bilinear regression model for the unsupervised learning of word representations, and the GloVe paper reports that it performs better than several other models.
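For reference, the GloVe paper formulates this log-bilinear regression as a weighted least-squares objective over the co-occurrence matrix. Here $X_{ij}$ is the number of times word $j$ appears in the context of word $i$, $w_i$ and $\tilde{w}_j$ are the word and context vectors, $b_i$ and $\tilde{b}_j$ are bias terms, and $V$ is the vocabulary size. The weighting function $f$ gives zero weight to pairs that never co-occur and caps the influence of very frequent pairs; the paper uses $x_{\max} = 100$ and $\alpha = 3/4$.

$$
J = \sum_{i,j=1}^{V} f(X_{ij}) \left( w_i^{\top} \tilde{w}_j + b_i + \tilde{b}_j - \log X_{ij} \right)^2
$$

$$
f(x) =
\begin{cases}
(x / x_{\max})^{\alpha} & \text{if } x < x_{\max} \\
1 & \text{otherwise}
\end{cases}
$$

Training pushes the dot product of a word vector and a context vector (plus biases) toward the logarithm of how often the two words co-occur, which is how global corpus statistics end up encoded in the vectors.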

Embedding Layer

An embedding layer is a word embedding that is learned jointly with a neural network model on a specific NLP task, such as document classification or language modeling. For the model to learn, the document text must be cleaned and each word prepared in one-hot encoded form. You specify the size of the vector space as part of the model, for example 50, 100, or 300 dimensions. The vectors are initialized with small random numbers. The embedding layer is placed at the front end of a neural network and is fitted in a supervised way using the backpropagation algorithm.

So, which vectors does the model treat as parameters? The input to a neural network can include symbolic categorical features, such as words from a fixed vocabulary. In this case, it is common to associate each possible feature value (each word) with its own vector. These vectors are model parameters, and they are trained jointly with the other parameters of the network.

During processing, the one-hot encoded words are mapped to their word vectors. If a Multilayer Perceptron (MLP) model is used, the process changes slightly: the word vectors are first concatenated and then fed to the model as input.

With recurrent neural networks, the process is different again: each word is taken as one input in a sequence. As you can see, this approach centers on training an embedding layer as part of the model. One of its biggest disadvantages is that it needs huge amounts of training data.
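A short sketch of two points from this section, using a hypothetical 4-by-3 embedding matrix: multiplying a one-hot vector by the matrix simply selects one row, which is why embedding layers are implemented as a lookup, and for a Multilayer Perceptron the vectors of the input words are concatenated into one input vector.

```python
# A tiny, hypothetical embedding matrix: 4 words x 3 dimensions.
E = [
    [0.1, 0.2, 0.3],   # word index 0
    [0.4, 0.5, 0.6],   # word index 1
    [0.7, 0.8, 0.9],   # word index 2
    [1.0, 1.1, 1.2],   # word index 3
]

def one_hot(index, size):
    """A vector of zeros with a single 1.0 at `index`."""
    return [1.0 if i == index else 0.0 for i in range(size)]

def matvec_row(v, matrix):
    """Multiply a row vector v by a matrix (v @ matrix)."""
    return [sum(v[k] * matrix[k][c] for k in range(len(v)))
            for c in range(len(matrix[0]))]

# Multiplying a one-hot vector by E just selects one row of E.
assert matvec_row(one_hot(2, len(E)), E) == E[2]

# For an MLP, the vectors of the input words are concatenated
# into one long input vector.
sentence_indices = [1, 3]
mlp_input = [x for idx in sentence_indices for x in E[idx]]
print(mlp_input)
```

For a recurrent network, the rows `E[1]` and `E[3]` would instead be fed in one at a time, as a sequence.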

Word2Vec

Word2Vec is a statistical method for learning a standalone word embedding from a text corpus, and it does so very efficiently. It was developed by Tomas Mikolov and his colleagues at Google to make the neural-network-based training of word embeddings more efficient. Their work also analyzed the learned vectors and explored vector arithmetic on word representations. The algorithm’s success at capturing analogies can be illustrated as follows.

King is a title given to a male ruler; the female equivalent is queen. Word2Vec captures this relationship: if you take the vector for “king”, subtract the vector for “man”, and add the vector for “woman”, the result is closest to the vector for “queen”. Experts describe this as capturing syntactic and semantic regularities in language. In other words, each relationship corresponds to a consistent, relation-specific shift in the vector space.

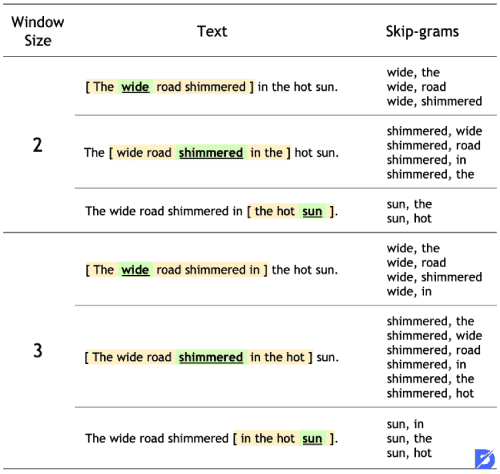
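The king/queen analogy can be reproduced with toy vectors. The sketch below assumes hand-made three-dimensional vectors (loosely “royalty”, “gender”, and “fruit-ness”) instead of trained Word2Vec embeddings, and, as is common in analogy evaluations, it excludes the three input words from the candidates.

```python
import math

# Hypothetical 3-dimensional vectors: [royalty, gender, fruit-ness].
vectors = {
    "king":  [0.9,  0.8, 0.1],
    "queen": [0.9, -0.8, 0.1],
    "man":   [0.1,  0.8, 0.1],
    "woman": [0.1, -0.8, 0.1],
    "apple": [0.1,  0.0, 0.9],
}

def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def analogy(a, b, c, vectors):
    """Return the word whose vector is closest to vec(a) - vec(b) + vec(c)."""
    target = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(vectors[w], target))

print(analogy("king", "man", "woman", vectors))  # queen
```

With trained embeddings the result is only approximately equal to the “queen” vector, which is why the nearest neighbor is used rather than an exact match.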

Word2Vec uses two main learning models to learn word embeddings: the Continuous Skip-Gram model and CBOW (Continuous Bag of Words). We explained CBOW at the beginning of this article: it predicts a word from its context. The skip-gram model works the other way around: it predicts the surrounding context words from the current word. Now, let’s get back to Word2Vec.

Word2Vec models define context through a window of neighboring words and learn word representations from the contexts this window produces. The window size is a configurable parameter, and the size of this sliding window has an important effect on the vector similarities the model derives from those contexts.
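A minimal sketch of how the window size changes the training data, using skip-gram-style (center word, context word) pairs. The helper name `skipgram_pairs` and the two window sizes are illustrative assumptions: a wider window produces more, and more distant, word pairs for the model to learn from.

```python
def skipgram_pairs(tokens, window):
    """Build (center word, context word) pairs within a sliding window."""
    pairs = []
    for i, center in enumerate(tokens):
        for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
            if j != i:
                pairs.append((center, tokens[j]))
    return pairs

sentence = "the cat jumped over the puddle".split()
small = skipgram_pairs(sentence, window=1)
large = skipgram_pairs(sentence, window=3)
print(len(small), len(large))
print([ctx for center, ctx in small if center == "jumped"])
```

With a window of 1, “jumped” is paired only with “cat” and “over”; with a window of 3, the same sentence yields 24 pairs instead of 10.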

Word Embeddings, In Short

With the development of NLP, word embedding models have become more important, and as algorithms evolve with advancing technology, these models produce better results every day. In this article, we first explained what word embedding is. Then we talked about the purpose and benefits of word embeddings, explained how word embedding works by examining the embedding matrix, and finally introduced the most popular word embedding algorithms.

Frequently Asked Questions About

The two most popular types of word embedding are prediction-based embedding and frequency-based embedding.

CBOW stands for Continuous Bag of Words.

The most popular algorithm for turning words into numbers is tokenization. The others are one-hot encoding and term frequency-inverse document frequency (TF-IDF).

LSA is a matrix factorization technique. It stands for Latent Semantic Analysis.

Word vectors are learned through machine learning, so that words with similar meanings, such as synonyms, end up with similar vectors.
