Today’s article is dedicated to SEO (Search Engine Optimization) strategists. We are going to refresh our memories about one of the central operations of SEO, namely log file analysis. By the way, when was the last time you conducted one? Hopefully, not too long ago. It should be a mandatory part of your routine. The reason? It gives you a better understanding of search engines and their crawlers. Once you have that, you also know which structural changes will benefit your website most. As a result, you can improve your chances of being indexed, rank higher, and attract more traffic.

We will first define the main characteristics of log analysis. Then we will have a closer look at the main advantages and recap some tips for an effective analysis.

Definition Of Log Analysis

A log file analysis is an SEO-oriented practice that investigates the relationship between web crawlers, sites, and servers. It relies on data files known as logs (often recognizable through the ‘.log’ file extension). These are like archives that store time-stamped records of the events occurring on a given server. That’s why we also hear the term log data analysis, which refers to the inspection of such archives. Whenever a request is made, whether by a user or by a bot, the server writes a row. Each row records one specific request along with the relevant details (IP, date, response time, etc.). Different formats are possible (NCSA, IBM Tivoli, W3C, Websphere, custom, etc.). Here’s a fictitious NCSA Common log file example:

125.125.125.125 - inflatedmug [03/Mar/2026:15:05:25 +0300] "GET /index.html HTTP/1.0" 200 1048

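Reading from left to right, you can recognize the client IP address, the username, the date and time with the time zone offset, the request, the status code, and the response size in bytes.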
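To make those fields concrete, here is a minimal Python sketch that splits one NCSA Common row into named parts. The regular expression and the `parse_line` helper are our own illustration, not part of any standard tool, and real logs may need a looser pattern.

```python
import re
from datetime import datetime

# NCSA Common Log Format: host ident authuser [timestamp] "request" status bytes
COMMON_LOG = re.compile(
    r'(?P<host>\S+) (?P<ident>\S+) (?P<user>\S+) '
    r'\[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<size>\d+|-)'
)

def parse_line(line):
    """Return a dict of fields, or None if the line does not match."""
    match = COMMON_LOG.match(line)
    if match is None:
        return None
    row = match.groupdict()
    # Timestamps look like 03/Mar/2026:15:05:25 +0300
    row["time"] = datetime.strptime(row["time"], "%d/%b/%Y:%H:%M:%S %z")
    row["status"] = int(row["status"])
    # A dash means no bytes were sent
    row["size"] = 0 if row["size"] == "-" else int(row["size"])
    return row

# Fictitious sample row in the Common format
sample = ('125.125.125.125 - inflatedmug [03/Mar/2026:15:05:25 +0300] '
          '"GET /index.html HTTP/1.0" 200 1048')
row = parse_line(sample)
print(row["host"], row["user"], row["request"], row["status"], row["size"])
```

Returning `None` for rows that do not match lets you count and inspect malformed lines instead of crashing halfway through a large file.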

What Are The Potential Benefits?

It would be no exaggeration to call log file analysis one of the cornerstones of SEO. It might not be the only form of analysis SEO experts need, nor is it an SEO-exclusive task. Nevertheless, it comes with a set of advantages. Below is an overview. Some of the points mentioned will be developed further in the next section.

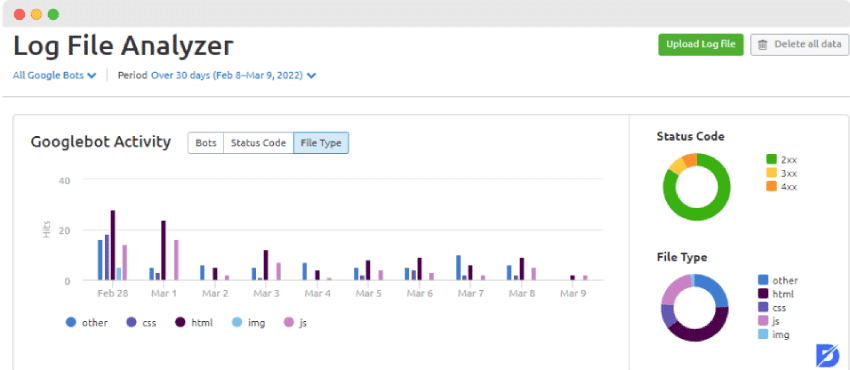

It Helps To Understand How Bots Work

Many would probably agree that it’s not always easy to grasp the internal mechanisms of web spiders. An in-depth investigation of log files provides valuable information about the frequency, duration, and scope of a crawling process. Let’s zoom in, for example, on the crawl frequency. Log file analysis lets you spot any increase or decrease in bot visits. You can thus see how your website is doing and make changes if necessary.
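As a rough illustration of tracking crawl frequency, the Python sketch below counts daily hits whose user-agent contains a given token. It assumes the NCSA Combined format (Common plus referrer and user-agent), since the Common format does not record the user-agent. The sample rows and the `bot_hits_per_day` helper are made up for the example.

```python
import re
from collections import Counter
from datetime import datetime

# Assumed layout: NCSA Combined format, which appends "referrer" and "user-agent"
COMBINED = re.compile(
    r'\S+ \S+ \S+ \[(?P<time>[^\]]+)\] "[^"]*" \d{3} \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

def bot_hits_per_day(lines, bot_token="Googlebot"):
    """Count requests per calendar day whose user-agent contains bot_token."""
    hits = Counter()
    for line in lines:
        match = COMBINED.match(line)
        if match and bot_token.lower() in match.group("agent").lower():
            stamp = datetime.strptime(match.group("time"), "%d/%b/%Y:%H:%M:%S %z")
            hits[stamp.date().isoformat()] += 1
    return dict(sorted(hits.items()))

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
# Fictitious rows for illustration only
sample_lines = [
    f'192.0.2.10 - - [03/Mar/2026:15:05:25 +0300] "GET / HTTP/1.1" 200 1048 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.10 - - [03/Mar/2026:16:10:00 +0300] "GET /blog HTTP/1.1" 200 2048 "-" "{GOOGLEBOT_UA}"',
    '192.0.2.55 - - [03/Mar/2026:16:11:00 +0300] "GET / HTTP/1.1" 200 1048 "-" "Mozilla/5.0"',
    f'192.0.2.10 - - [04/Mar/2026:09:00:00 +0300] "GET / HTTP/1.1" 200 1048 "-" "{GOOGLEBOT_UA}"',
]
daily = bot_hits_per_day(sample_lines)
print(daily)
```

Comparing these daily counts week over week is a simple way to notice a sudden drop or spike in bot visits.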

It Pinpoints Problematic Elements

You get a clearer view of the way resources are used while also detecting any crawl budget waste. One of the most annoying scenarios is when bots encounter too many obsolete pages on your site. Log files help you monitor your budget more closely. Instead of wasting it, you can direct it toward the pages that matter.

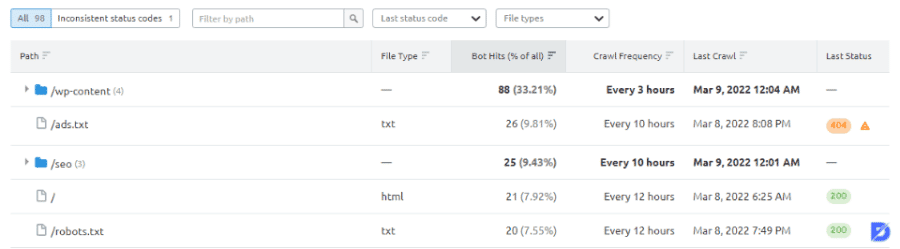

It Discloses Errors In Status Codes

No website is 100% exempt from errors. Bots are ‘smart’ enough not to treat each of them as a major bug and penalize you systematically. However, too many 404 or 500 responses can become alarming. The same goes for 302 redirects. A thorough analysis can help you take corrective action on those.
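A quick way to see whether error codes are piling up is to tally them. The Python sketch below assumes you have already extracted `(path, status)` pairs from your log. The `status_report` helper and the watched set of codes are illustrative choices, not a standard.

```python
from collections import Counter, defaultdict

# Status codes singled out in this article; adjust to your needs
WATCHED = {302, 404, 500}

def status_report(rows):
    """rows: iterable of (path, status). Returns overall counts and flagged URLs."""
    rows = list(rows)
    by_status = Counter(status for _, status in rows)
    flagged = defaultdict(Counter)
    for path, status in rows:
        if status in WATCHED:
            flagged[status][path] += 1
    # For each watched code, list URLs from most to least frequent
    return by_status, {code: urls.most_common() for code, urls in flagged.items()}

# Fictitious rows for illustration only
rows = [("/old", 404), ("/old", 404), ("/", 200), ("/tmp", 302), ("/api", 500)]
by_status, flagged = status_report(rows)
print(by_status)
print(flagged)
```

The per-URL breakdown matters more than the totals: a single URL returning 404 hundreds of times usually points to one broken internal link.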

It Determines Traffic-Generating Pages

You may also analyze the effectiveness of your previous decisions by viewing the pages that bring organic traffic to your site. Furthermore, an analysis of those pages can reveal finer details and required adjustments.

Components to Track During a Log File Analysis

Actually, there are many components to track, note down, and possibly improve. We won’t enumerate all of them but rather keep it more generic. Otherwise, our article would be likely to become an encyclopedia. More seriously speaking, the following points might be useful for any SEO practitioner:

Obsolete and Defective Pages

We start with these because there’s hardly anything that sabotages your website more sneakily. Such pages ruin your efforts, encroach on your crawl budget, and damage your domain authority. Among them, we have pages with zero value (also known as dead or zombie pages). It’s as if they were long forgotten by both visitors and bots. The thing is, in most cases there are still internal links pointing to them. The simplest solution is to remove them, along with all the related links. Returning a 404 or 410 status code for the removed URLs tells crawlers that the pages are gone. However, if you think that the pages in question can still be useful, then opt for a noindex directive. Similarly, set a nofollow directive for the related internal links.

Another category of problematic pages is orphan pages. They may not necessarily be defective in terms of content quality or relevance. It’s just that they are treated like ghosts because there are no internal links pointing to them. During a log file analysis, you can spot them by going through the non-indexable pages and the URLs that appear in your logs without being linked from anywhere on your site. You can then reintegrate them by redesigning your site structure. Updating their content and assigning them internal links is also recommended. Additionally, you may rehabilitate them through a 301 redirect toward relevant pages.
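One possible way to shortlist orphan candidates is a set difference: URLs that show up in your logs minus URLs reachable through internal links. The Python sketch below assumes you can export both lists (for instance from your parsed logs and from a site crawl). The `normalize` and `orphan_candidates` helpers are hypothetical names for illustration.

```python
from urllib.parse import urlsplit

def normalize(url):
    """Keep only the path, dropping the query string and any trailing slash."""
    path = urlsplit(url).path
    return path.rstrip("/") or "/"

def orphan_candidates(logged_urls, linked_urls):
    """URLs requested according to the logs but not linked internally."""
    logged = {normalize(u) for u in logged_urls}
    linked = {normalize(u) for u in linked_urls}
    return sorted(logged - linked)

# Fictitious inputs for illustration only
logged_urls = ["/a/", "/b?x=1", "/c", "/"]
linked_urls = ["/a", "/", "https://example.com/c/"]
orphans = orphan_candidates(logged_urls, linked_urls)
print(orphans)
```

Treat the result as a list of candidates to review by hand, since a URL can also be missing from the crawl export for reasons unrelated to linking.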

Crawl Budget

That crawl budget we keep talking about is definitely something to watch while conducting a log file analysis. You can get at least a partial idea of your budget by checking the crawl stats in Google Search Console. An alternative is to filter your log rows by the ‘Googlebot’ user-agent and confirm that the requests come from a genuine Google IP address, since the user-agent string alone can be spoofed.
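A common verification approach is a reverse DNS lookup on the IP followed by a forward lookup on the returned hostname. The Python sketch below follows that idea. The accepted hostname suffixes are an assumption you should check against Google’s current documentation, the `is_verified_googlebot` helper is our own, and the default forward lookup only handles IPv4. The resolvers are injectable so the example can run offline with stubs.

```python
import socket

# Assumed hostname suffixes for genuine Googlebot hosts; verify before relying on them
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")

def is_verified_googlebot(ip, user_agent,
                          reverse=socket.gethostbyaddr,
                          forward=socket.gethostbyname_ex):
    """Check the user-agent, then confirm the IP via reverse and forward DNS."""
    if "googlebot" not in user_agent.lower():
        return False
    try:
        host = reverse(ip)[0]              # reverse lookup: IP -> hostname
        if not host.endswith(GOOGLE_SUFFIXES):
            return False
        return ip in forward(host)[2]      # forward lookup: hostname -> IPs
    except OSError:                        # covers DNS lookup failures
        return False

# Offline stubs standing in for real DNS answers (hypothetical hostname and IPs)
def fake_reverse(ip):
    return ("crawl-example.googlebot.com", [], [ip])

def fake_forward(host):
    return (host, [], ["192.0.2.7"])

UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
print(is_verified_googlebot("192.0.2.7", UA, fake_reverse, fake_forward))
print(is_verified_googlebot("192.0.2.8", UA, fake_reverse, fake_forward))
```

In production you would call the function without the stub arguments and cache the results, because DNS lookups on every log row are slow.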

You probably want bots to crawl only your best pages, right? Unfortunately, bots can get caught in irrelevant parts of your site, especially if there are configuration issues. One annoying example is when they spend a lot of time crawling your static assets. What’s the point of losing budget on elements that you change only once in a while? So you may want to check your cache-control HTTP headers.
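To estimate how much bot activity goes to static assets, you could classify the requested paths by file extension. The Python sketch below does just that. The extension list and the `asset_share` helper are illustrative assumptions, and the input is taken to be the paths already filtered down to bot requests.

```python
from collections import Counter
from posixpath import splitext
from urllib.parse import urlsplit

# Assumed list of static asset extensions; extend it to match your site
ASSET_EXTENSIONS = {".css", ".js", ".png", ".jpg", ".jpeg", ".gif",
                    ".svg", ".woff2", ".ico"}

def asset_share(paths):
    """Fraction of requests that target static assets (0.0 if there are none)."""
    kinds = Counter()
    for path in paths:
        # Ignore query strings such as ?v=2 before reading the extension
        extension = splitext(urlsplit(path).path)[1].lower()
        kinds["asset" if extension in ASSET_EXTENSIONS else "page"] += 1
    total = sum(kinds.values())
    return kinds["asset"] / total if total else 0.0

# Fictitious bot-requested paths for illustration only
bot_paths = ["/a.css", "/b.js?v=2", "/index.html", "/blog/"]
share = asset_share(bot_paths)
print(f"{share:.0%} of bot hits went to static assets")
```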

Also verify whether you are overusing redirects (301, 302, etc.). Depending on the analysis results, you may have to update the links so that they point directly to the appropriate destination URLs.

Don’t forget that bots appreciate clear commands. So don’t hesitate to add more noindex directives when necessary. You can also get help from robots.txt files. Make sure to use them with moderation as they often tend to restrict bots’ actions.
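If you want to check which logged URLs your robots.txt rules would block for a given bot, Python’s standard `urllib.robotparser` module can evaluate the rules locally. The sketch below is a minimal example. The rules, the paths, and the `blocked_paths` helper are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

def blocked_paths(robots_lines, paths, agent="Googlebot"):
    """Return the paths that the given robots.txt lines disallow for agent."""
    parser = RobotFileParser()
    parser.parse(robots_lines)  # parse rules from a list of lines, no network access
    return [path for path in paths if not parser.can_fetch(agent, path)]

# Fictitious rules and paths for illustration only
rules = ["User-agent: *", "Disallow: /private/"]
paths = ["/private/report.html", "/blog/post"]
blocked = blocked_paths(rules, paths)
print(blocked)
```

Running your most-crawled URLs through a check like this helps confirm that a rule restricts only what you intended.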

Skipped Elements

Understand it as ‘skipped by Google and its bots.’ This can mean several things. There may be, for example, indexable pages that Googlebot has been ignoring lately. Your log files can give you the last crawl dates. This allows you to sort your pages by date and set an order of priority. Then you can start updating the content of those at the top of your list. Make sure once more that they are in your XML sitemap too. Building internal and external links to those pages is also an effective way to bring them back to Google’s attention.
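Sorting pages by their last crawl date can be sketched in a few lines of Python. The example assumes you already have `(path, timestamp)` pairs for bot requests. The `stale_pages` helper and the 30-day threshold are arbitrary choices for illustration.

```python
from datetime import datetime

def stale_pages(hits, now, min_days=30):
    """hits: iterable of (path, datetime). Returns (path, days_since_last_crawl),
    longest-ignored first, for pages not crawled in at least min_days days."""
    last_seen = {}
    for path, stamp in hits:
        # Keep only the most recent crawl per path
        if path not in last_seen or stamp > last_seen[path]:
            last_seen[path] = stamp
    stale = [(path, (now - stamp).days) for path, stamp in last_seen.items()
             if (now - stamp).days >= min_days]
    return sorted(stale, key=lambda item: item[1], reverse=True)

# Fictitious crawl hits for illustration only
hits = [
    ("/a", datetime(2026, 1, 1)),
    ("/a", datetime(2026, 2, 20)),
    ("/b", datetime(2026, 1, 10)),
    ("/c", datetime(2025, 12, 1)),
]
for path, days in stale_pages(hits, now=datetime(2026, 3, 3)):
    print(path, days)
```

Pages that never appear in the logs at all will not show up here, so it is worth cross-checking the output against your XML sitemap.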

Now here’s a parallel problem that occurs quite frequently. Google may be crawling too many of your pages without any clear priority. A log file analysis can make you notice that your site presents all its pages as if they were equally important. From an SEO perspective, this may not always be a good idea. Instead, you should highlight the pages that are the most relevant to your business or project. How? Again through links, but only to the most important sections. You may also need to readjust your site structure to increase the visibility of the top-priority pages.

Log File Analysis in Brief

A log file analysis is one of the best allies of your overall SEO strategy. It gives you abundant information about your website’s internal state. You can spot and correct issues much more quickly while learning to anticipate bots’ actions. As a consequence, you are better equipped to maintain a fresh and responsive website. All this can help increase your organic traffic and open your way up to the top of SERPs.

Frequently Asked Questions About

Yes, it is. Keep in mind, though, that commands are likely to vary from one operating system to the other. So make sure to refer to the guidelines specifically provided for the brand you are using.

You may use Console to locate them. Check the sidebar to see if the Log Reports are visible. If yes, select them. If not, just click on the Sidebar button. Make your file selection. Then the steps are as follows: File > Reveal in Finder. You will get a Finder window displaying the selected file.

The system does not always record the changes instantly. Give it some time. Also make sure that you haven’t omitted anything or forgotten to confirm your latest modifications.

There are plenty of reliable and effective log file analysis tools available on the market. ELK Stack, Logstash, Graylog, and GoAccess are some of the good open-source options. They allow you to analyze logs in the cloud, customize your dashboard, generate reports in several formats, etc.

This is quite normal. When you remove pages without redirection, their URLs are likely to return 4XX status codes. Think of it as a necessary transition period until Google adjusts to the changes on your site.
