At the crossroads of web development and search engine optimization (SEO), a new discipline has emerged: JavaScript SEO. But what is JavaScript SEO? At its core, it refers to the practices and techniques that ensure websites with JavaScript-driven content are properly crawled, indexed, and ranked by search engines. Given the rising prominence of dynamic, JavaScript-based web applications, this area of SEO cannot be overlooked. Read on to understand the nuances of JavaScript SEO, why it matters today, and the actionable steps that help your JS-powered site shine in search results.

Put concretely, JavaScript SEO is the branch of SEO that focuses on websites built with JavaScript. Its goal is to make sure search engines crawl and index them as correctly as they would any other site.

Search engines, for their part, keep improving in this area. Google, for example, announced that its web rendering service now runs on a recent version of Chromium and therefore supports many modern JavaScript features.

What Is JavaScript?

JavaScript is a programming language used to make web pages interactive and dynamic, for example to power comment sections or AJAX recommendation widgets. It runs in the visitor’s browser, so parts of a page can be updated without downloading the whole page from the server again.

Because the browser executes JavaScript source directly, you do not need a compiler or any special program to produce usable code: a simple text editor is enough to write JS. It is also the only scripting language supported by all web browsers that offer client-side scripting.

In other words, JavaScript SEO is about building JavaScript websites that can rank well, and it falls under technical SEO. Its aim is to create fast, feature-rich websites that are also search-engine friendly. To get there, focus on the experience of both users and search engines.

JavaScript SEO Today

JavaScript SEO is undoubtedly a hot topic nowadays. More and more websites use modern JavaScript frameworks and libraries such as Polymer, Angular, React, and Vue.js, thanks to their capabilities and the innovative programming approaches and tools they bring.

Even so, SEOs and developers are still at the beginning of the journey to make modern JavaScript frameworks succeed in search. Google and other search engines have been working on the problem for years, yet there is clearly a long way to go: popular sites built on these frameworks still perform poorly in search results.

This article explains why this happens. Where possible, it also offers the workarounds and solutions you need to optimize JavaScript sites for search engines, with a focus on Google. These are the main points:

- How to ensure that Google’s crawlers can correctly render a website

- How to see a website the way Google sees it

- The most common JavaScript SEO errors, and why they occur

- What Google’s deprecation of the old AJAX crawling scheme means

- Which is better: prerendering, a single-page application (SPA), or isomorphic JavaScript?

- Whether it is acceptable to detect Googlebot by its user agent and serve it prerendered HTML and CSS

- Whether other search engines, such as Bing, can process JavaScript

Can Google Crawl and Render JavaScript?

Google has claimed to be quite good at rendering JavaScript websites since at least 2014, when it published a blog post saying so. Even so, Google’s own engineers have urged caution with JavaScript pages, as this excerpt shows:

“Sometimes things don’t go perfectly during rendering, which may negatively impact search results for your site. [...] Sometimes the JavaScript may be too complex or arcane for us to execute, in which case we can’t render the page fully and accurately.”

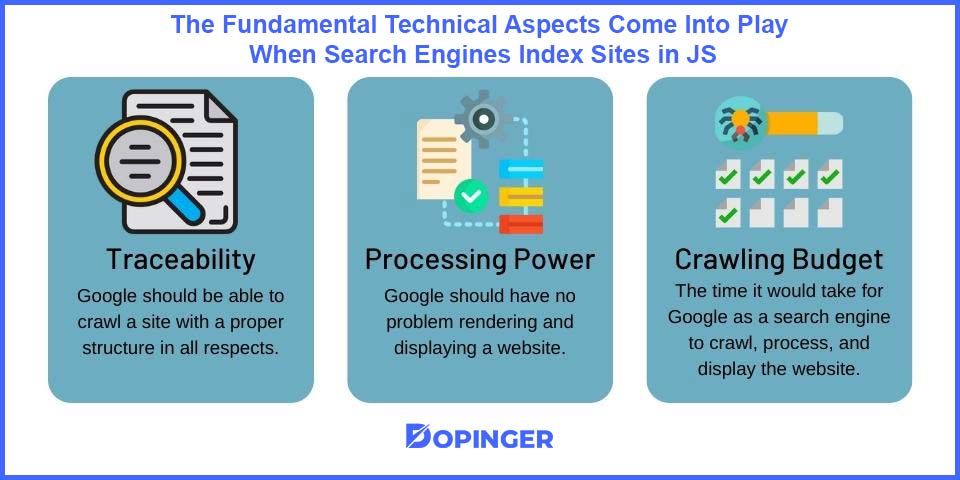

Three fundamental technical aspects come into play when search engines index JavaScript sites:

- Crawlability: Google must be able to crawl the site and follow its structure.

- Renderability: Google must be able to render and display the pages without problems.

- Crawl budget: the time and resources Google needs to crawl, render, and display the website.

Client-Side and Server-Side Rendering

When you look at whether Google can crawl and render JavaScript sites, two fundamental concepts come up: client-side and server-side rendering. Any SEO professional who wants to optimize these sites needs to understand both.

In the traditional server-side rendering approach, a browser or Googlebot receives HTML that fully describes the page. The content is already there, so the browser or Googlebot only needs to download the CSS and paint the page. Search engines generally have no problem with server-rendered content, because this is how almost the entire web has traditionally worked.

Client-side rendering, which is increasingly popular, works differently, and search engines often struggle with this less traditional approach. Here, a browser or Googlebot commonly receives a blank or nearly empty HTML page on initial load. Then “the magic” happens: JavaScript asynchronously downloads the content from the server and updates the page. This is the key difference from a page delivered as complete HTML.
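To make the contrast concrete, here is a minimal TypeScript sketch built around a hypothetical product page. One function returns fully server-rendered HTML. The other returns the kind of near-empty shell a client-rendered app sends on first load; `/bundle.js` is a placeholder path. A third function roughly approximates what a crawler that does not execute JavaScript can read. It is a simplification, not how Googlebot works internally.

```typescript
// Hypothetical product data, for illustration only (values are not HTML-escaped).
interface Product {
  name: string;
  description: string;
}

// Server-side rendering: the content is already in the HTML response.
function renderServerSide(product: Product): string {
  return [
    "<!doctype html>",
    `<html><head><title>${product.name}</title></head>`,
    "<body>",
    `<h1>${product.name}</h1>`,
    `<p>${product.description}</p>`,
    "</body></html>",
  ].join("\n");
}

// Client-side rendering: an empty container plus a script that would fetch
// and insert the content later ("/bundle.js" is a placeholder).
function renderClientShell(): string {
  return [
    "<!doctype html>",
    "<html><head><title>Loading...</title></head>",
    "<body>",
    '<div id="app"></div>',
    '<script src="/bundle.js"></script>',
    "</body></html>",
  ].join("\n");
}

// Rough view of a non-rendering crawler: drop scripts, strip tags, collapse whitespace.
function textWithoutJavaScript(html: string): string {
  return html
    .replace(/<script[\s\S]*?<\/script>/gi, "")
    .replace(/<[^>]+>/g, " ")
    .replace(/\s+/g, " ")
    .trim();
}

const product: Product = { name: "Blue Mug", description: "A 350 ml ceramic mug." };
console.log(textWithoutJavaScript(renderServerSide(product))); // Blue Mug Blue Mug A 350 ml ceramic mug.
console.log(textWithoutJavaScript(renderClientShell())); // Loading...
```

The server-rendered page exposes its content immediately. The shell only exposes "Loading..." until JavaScript runs, which is why rendering matters so much for client-side apps.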

If your site uses client-side rendering, always check that Google can crawl and render it correctly, because JavaScript is far less forgiving of errors than HTML. Browsers recover from most HTML mistakes. A single uncaught JavaScript error, by contrast, can stop a script from running and leave Google unable to render your page. Errors matter on any site, but they are much more critical on sites built in JS.
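One general way to limit that fragility is to render each part of a page independently and catch its errors, so a single failing component does not blank the whole page. The TypeScript sketch below illustrates this. It assumes a page assembled from string-returning widgets; the widget names and the simulated failure are hypothetical.

```typescript
// A widget is any function that returns an HTML fragment.
type Widget = () => string;

// Render every widget on its own; if one throws, log the error and emit a
// placeholder comment instead of aborting the entire page.
function renderPage(widgets: Record<string, Widget>): string {
  const parts: string[] = [];
  for (const name of Object.keys(widgets)) {
    try {
      parts.push(widgets[name]());
    } catch (err) {
      console.error(`widget "${name}" failed:`, err);
      parts.push(`<!-- ${name} unavailable -->`);
    }
  }
  return parts.join("\n");
}

const html = renderPage({
  article: () => "<article><h1>JavaScript SEO</h1></article>",
  // Simulates a buggy widget whose data source returns bad data.
  recommendations: () => {
    throw new Error("recommendations API returned malformed JSON");
  },
});
console.log(html);
```

The main article content still reaches the output even though the recommendations widget throws.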

The Dynamic Rendering / Prerendering Method

This method serves client-side rendered content to users, while search engines get server-side rendered (prerendered) content. Your site therefore has to detect dynamically whether a request comes from a search engine. And for those who are wondering: no, Google does not consider this cloaking, as long as both versions contain essentially the same content.

Google no longer supports the old escaped-fragment AJAX crawling scheme. For URLs, it recommends using the History API (pushState) rather than fragments. Prerendering tools such as Prerender.io, BromBone, and PhantomJS produce a static, cached version of your pages.
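The detection step of dynamic rendering can be sketched as follows. This is a minimal TypeScript example under stated assumptions. The bot token list is illustrative and incomplete. The two response strings are hypothetical stand-ins for your prerendering tool’s snapshot and your normal app shell. Both should carry the same content; only the delivery differs.

```typescript
// Illustrative, incomplete list of crawler user-agent substrings (an assumption;
// maintain your own list for the crawlers you care about).
const BOT_TOKENS = ["googlebot", "bingbot", "duckduckbot", "yandexbot", "baiduspider"];

// True if the User-Agent header contains one of the known bot tokens.
function isSearchEngineBot(userAgent: string | undefined): boolean {
  if (!userAgent) return false;
  const ua = userAgent.toLowerCase();
  return BOT_TOKENS.some((token) => ua.includes(token));
}

// Bots get the prerendered snapshot; everyone else gets the client-side app.
// Both arguments are placeholders for your real responses.
function selectResponse(
  userAgent: string | undefined,
  prerenderedHtml: string,
  clientShell: string,
): string {
  return isSearchEngineBot(userAgent) ? prerenderedHtml : clientShell;
}

const googlebotUA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)";
const browserUA =
  "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36";

console.log(selectResponse(googlebotUA, "<prerendered snapshot>", "<app shell>")); // logs <prerendered snapshot>
console.log(selectResponse(browserUA, "<prerendered snapshot>", "<app shell>")); // logs <app shell>
```

A User-Agent header can be spoofed. If you need to be sure a request really comes from Googlebot, Google documents verifying it with a reverse DNS lookup.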

JavaScript SEO for Lazy-Loaded Images

There are at least two great reasons to consider lazy-loading images for your website:

- If your website uses JavaScript to display content or provide functionality for users, loading the DOM quickly becomes critical. Scripts commonly wait until the page has finished loading, images included, before executing. On a site with many images, lazy loading (loading images asynchronously, only when they are needed) can make the difference between users staying on or leaving your website.

- Most lazy loading solutions load images only when the user scrolls to where they would appear in the viewport, so images the user never reaches are never downloaded. This can mean huge savings in bandwidth, which users on mobile devices and slow connections in particular will appreciate. Lazy loading images also speeds up page loads, which supports your website’s SEO performance. If your site already uses JavaScript, implementing lazy loading shouldn’t be a problem.
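If you do not need a custom scroll-based script, modern browsers support native lazy loading through the `loading="lazy"` attribute on `<img>`. The TypeScript sketch below generates image markup that way. It assumes a hypothetical `ImageSpec` shape and an arbitrary cutoff: the first two images are treated as above the fold and load normally, and the rest are deferred.

```typescript
interface ImageSpec {
  src: string;
  alt: string;
  width: number;
  height: number;
}

// Escape characters that would break an HTML attribute value.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Images with index < eagerCount load normally; the rest get loading="lazy",
// so the browser defers them until they approach the viewport.
function renderImages(images: ImageSpec[], eagerCount = 2): string {
  return images
    .map((img, i) => {
      const lazy = i >= eagerCount ? ' loading="lazy"' : "";
      return (
        `<img src="${escapeAttr(img.src)}" alt="${escapeAttr(img.alt)}"` +
        ` width="${img.width}" height="${img.height}"${lazy}>`
      );
    })
    .join("\n");
}

// Hypothetical gallery.
const gallery: ImageSpec[] = [
  { src: "/img/hero.jpg", alt: "Hero", width: 1200, height: 600 },
  { src: "/img/a.jpg", alt: "Product A", width: 400, height: 400 },
  { src: "/img/b.jpg", alt: "Product B", width: 400, height: 400 },
];
console.log(renderImages(gallery));
```

Because every `src` stays in the HTML, crawlers can still discover the image URLs without scrolling. The `width` and `height` attributes let the browser reserve space before each image arrives.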

The Best JavaScript Frameworks for SEO

Some frameworks, such as React and Angular (version 2 and later), natively support server-side HTML rendering for search engines. Others need a third-party prerendering service.

Angular and React

Newer versions of Angular (4 and later, with Angular Universal) and React offer server-side rendering (SSR), which brings several benefits. Upgrading to a recent version is the best way to move away from the classic AJAX crawling approach. It helps ensure that search engines, social media platforms, and other crawlers can consistently and accurately read your site’s content.

React + Next.js

React has supported server rendering since its early versions. Apps rendered this way used to be called “universal” applications; today they are usually called SSR (server-side rendering) applications. In other words, a React web app can always be rendered on the server.

The difficulty lies in the external requests that retrieve data. These calls are asynchronous, so the server must wait for all responses before sending the rendered application. You also need to make sure the libraries you use are SSR-compatible.
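Here is a framework-free TypeScript sketch of the point about asynchronous calls: the server waits for every data request to settle before serializing the page, so the HTML a crawler receives already contains the data. `fetchProduct` and `fetchReviews` are hypothetical stand-ins for real HTTP or database calls.

```typescript
interface Product {
  name: string;
  price: number;
}

// Hypothetical data sources; in a real app these would be network or DB calls.
async function fetchProduct(id: string): Promise<Product> {
  return { name: `Product ${id}`, price: 19.9 };
}
async function fetchReviews(id: string): Promise<string[]> {
  return [`Great product (${id})`, "Fast delivery"];
}

// Run the requests in parallel and render only once all of them have resolved.
async function renderProductPage(id: string): Promise<string> {
  const [product, reviews] = await Promise.all([fetchProduct(id), fetchReviews(id)]);
  const reviewItems = reviews.map((r) => `<li>${r}</li>`).join("");
  return `<h1>${product.name}</h1><p>${product.price.toFixed(2)}</p><ul>${reviewItems}</ul>`;
}

renderProductPage("42").then((page) => console.log(page));
```

In Next.js, data-fetching functions such as `getServerSideProps` (in the Pages Router) play this role: the framework waits for the data before it renders the page.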

Next.js is ideal if you want to build a powerful web application that search engines can index. It uses its own next/head component instead of react-helmet to manage the title and description meta tags. Don’t panic: it works in much the same way. The Vue.js equivalent of Next.js is Nuxt.js.
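Whichever helper manages them (next/head, react-helmet, or a hand-written template), the goal is the same: a `<title>` and a `<meta name="description">` tag present in the server-rendered HTML. The minimal TypeScript sketch below emits those tags with escaping. The `PageMeta` shape is a hypothetical illustration, not part of any of those libraries.

```typescript
interface PageMeta {
  title: string;
  description: string;
}

// Minimal escaping for text content and attribute values.
function escapeHtml(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Produce the tags that should appear in the server-rendered <head>.
function renderHeadTags(meta: PageMeta): string {
  return [
    `<title>${escapeHtml(meta.title)}</title>`,
    `<meta name="description" content="${escapeHtml(meta.description)}">`,
  ].join("\n");
}

console.log(
  renderHeadTags({
    title: "JavaScript SEO & Next.js",
    description: 'How "server-side rendering" helps crawlers',
  }),
);
```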

Common Mistakes With JavaScript Websites

Sites built with JavaScript tend to share a handful of common technical SEO mistakes. Here is a list of them, to make them easier to avoid.

Blocking JavaScript and CSS Files for Google Robots

Since Googlebot can crawl JavaScript and render content, make sure the internal and external resources it needs for rendering, such as scripts and stylesheets, are not blocked for it (for example, by robots.txt rules).
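A common place for such blocking to hide is robots.txt. As a rough smoke test, the TypeScript sketch below checks whether given script and stylesheet paths would be disallowed. It is deliberately simplified and is not a full robots.txt implementation:

- It supports only `User-agent`, `Allow`, and `Disallow` lines with plain path prefixes (no `*` or `$` wildcards).
- It applies the rule with the longest matching path and lets `Allow` win ties.
- It falls back to the `*` group when no group names the crawler.

The robots.txt content and paths are hypothetical.

```typescript
// A single Allow/Disallow rule with a plain path prefix.
interface Rule {
  allow: boolean;
  path: string;
}

// Parse robots.txt into rule lists keyed by lower-cased user-agent token.
// Consecutive User-agent lines share the rules that follow them.
function parseRobots(txt: string): Map<string, Rule[]> {
  const groups = new Map<string, Rule[]>();
  let agents: string[] = [];
  let lastWasAgent = false;
  for (const raw of txt.split(/\r?\n/)) {
    const line = raw.replace(/#.*$/, "").trim(); // drop comments
    const colon = line.indexOf(":");
    if (colon === -1) continue;
    const field = line.slice(0, colon).trim().toLowerCase();
    const value = line.slice(colon + 1).trim();
    if (field === "user-agent") {
      if (!lastWasAgent) agents = []; // a new group starts
      const agent = value.toLowerCase();
      agents.push(agent);
      if (!groups.has(agent)) groups.set(agent, []);
      lastWasAgent = true;
    } else if (field === "allow" || field === "disallow") {
      lastWasAgent = false;
      if (value === "") continue; // e.g. an empty Disallow blocks nothing
      for (const agent of agents) {
        groups.get(agent)!.push({ allow: field === "allow", path: value });
      }
    }
  }
  return groups;
}

// Longest matching path wins; on a tie, Allow wins; no matching rule means allowed.
function isAllowed(groups: Map<string, Rule[]>, agent: string, path: string): boolean {
  const rules = groups.get(agent.toLowerCase()) ?? groups.get("*") ?? [];
  let best: Rule | undefined;
  for (const rule of rules) {
    if (!path.startsWith(rule.path)) continue;
    const longer = !best || rule.path.length > best.path.length;
    const tieAllow = best !== undefined && rule.path.length === best.path.length && rule.allow;
    if (longer || tieAllow) best = rule;
  }
  return best ? best.allow : true;
}

// Hypothetical robots.txt that accidentally blocks the JavaScript bundle.
const robotsTxt = ["User-agent: *", "Disallow: /assets/", "Allow: /assets/css/"].join("\n");

const groups = parseRobots(robotsTxt);
for (const path of ["/assets/js/app.js", "/assets/css/site.css"]) {
  console.log(path, isAllowed(groups, "Googlebot", path) ? "crawlable" : "BLOCKED");
}
// /assets/js/app.js BLOCKED
// /assets/css/site.css crawlable
```

For authoritative answers, inspect the page in Search Console or use a parser that follows Google’s published robots.txt rules, such as Google’s open-source robots.txt library.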

Use Google Search Console

If you see a significant drop in rankings for an otherwise healthy website, check how Google renders your pages. This used to be done with Search Console’s Fetch and Render feature, which has since been replaced by the URL Inspection tool. In general, it is good practice to inspect a random sample of URLs from time to time to make sure the website renders correctly.
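Drawing that random sample is easy to automate. This minimal TypeScript sketch assumes you already have a list of URLs, for example from your XML sitemap; the URLs here are hypothetical. It picks a few distinct URLs with a partial Fisher-Yates shuffle, so each run checks a different subset. The random function can be swapped out for reproducible runs.

```typescript
// Pick up to `k` distinct URLs uniformly at random (partial Fisher-Yates shuffle).
// `random` defaults to Math.random but can be replaced for reproducible runs.
function sampleUrls(urls: string[], k: number, random: () => number = Math.random): string[] {
  const pool = urls.slice(); // copy so the caller's array is not modified
  const n = Math.min(k, pool.length);
  for (let i = 0; i < n; i++) {
    // Choose an index in [i, pool.length) and swap it into position i.
    const j = i + Math.floor(random() * (pool.length - i));
    [pool[i], pool[j]] = [pool[j], pool[i]];
  }
  return pool.slice(0, n);
}

// Hypothetical URLs, e.g. read from a sitemap.
const sitemapUrls = [
  "https://example.com/",
  "https://example.com/products",
  "https://example.com/products/blue-mug",
  "https://example.com/blog/javascript-seo",
  "https://example.com/contact",
];
console.log(sampleUrls(sitemapUrls, 3));
```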

Focus on the “onclick” Event

Remember that Googlebot is not a real user. Assume it does not click, fill out forms, or otherwise interact with the page the way a person would. This has many practical implications; here are two:

If you run an online store and the content behind a “show more” button does not appear in the DOM until someone clicks, that is a clear sign Google will not see it. The same applies to menu links on faceted pages.

All links must have an href attribute, without exception. If a link relies only on an onclick event, Google will not discover it, so the target page will not be crawled or indexed through that link.
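To illustrate the href rule in markup, here is our own rough TypeScript sketch of how a crawler collects links; it is not Google’s code. It picks up only `href` values on `<a>` elements and ignores navigation that happens in an `onclick` handler. The regex-based extraction is a simplification, since real crawlers use a full HTML parser, and the category URLs are hypothetical.

```typescript
// Collect href values from <a> tags (simplified regex, for illustration only).
function extractCrawlableLinks(html: string): string[] {
  const links: string[] = [];
  const anchorHref = /<a\b[^>]*\bhref\s*=\s*["']([^"']+)["'][^>]*>/gi;
  let match: RegExpExecArray | null;
  while ((match = anchorHref.exec(html)) !== null) {
    links.push(match[1]);
  }
  return links;
}

const markup = `
  <a href="/category/mugs">Mugs</a>
  <span onclick="location.href='/category/plates'">Plates</span>
  <a onclick="goTo('/category/bowls')">Bowls</a>
`;

console.log(extractCrawlableLinks(markup));
```

Only the first link is discoverable. The fix is to render real `<a href>` elements and attach any JavaScript behaviour to them, rather than replacing links with click handlers.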

JavaScript SEO in Short

It is still not certain whether Google treats and ranks JavaScript websites the same way as plain HTML sites; much of what is known comes from experiments with varying results. Even so, SEOs and developers are beginning to understand how to make modern JavaScript frameworks crawlable. Keep in mind, though, that there is no one-size-fits-all rule.

Every website is different. If you plan to build a JavaScript-rich web platform, make sure developers and SEOs work together. Done right, a JavaScript site can generate traffic and visits and contribute to the success of a company, business, or organization.

Frequently Asked Questions

Yes, JavaScript is supported by all web browsers that support client-side scripting.

There are two fundamental concepts: client-side and server-side rendering.

In general, search engines have no problem with server-rendered content, because that is how almost the entire web has traditionally worked.

Google no longer recommends the use of escaped fragments.

Checking a random sample of URLs from time to time with Fetch and Render (now the URL Inspection tool) helps ensure that a website is rendered correctly.
